Most developers treat PostgreSQL like a black box that occasionally surprises them — tables that grow when they should shrink, queries that crawl after a batch job, autovacuum settings copy-pasted from a Stack Overflow answer nobody understands. The surprises stop being surprises once you can see how rows are actually stored, versioned, and reclaimed. This post walks the storage model from the byte level up so the tuning knobs become obvious.

TL;DR

- Every

UPDATEin Postgres is really anINSERT + DELETE— old row versions stay on disk until VACUUM reclaims them.- Tuples carry

xmin/xmaxtransaction IDs so MVCC can decide visibility without locks.- HOT updates avoid index churn when the new row fits the same page and no indexed column changed — aim for >80% HOT on hot OLTP tables.

- Bloat is the normal price of MVCC; measure it with

pgstattuple/pgstatindexand tune autovacuum per-table, not globally.- VACUUM does three jobs: reclaim space, freeze old XIDs, and update the visibility map —

VACUUM FULLis an outage, not maintenance.- Transaction ID wraparound is a hard outage if autovacuum loses the race; monitor

age(datfrozenxid)and alarm at 40-50%.- Long-running transactions and abandoned replication slots pin the xmin horizon and are the most common bloat root cause.

The One Sentence Most Developers Don’t Know

In PostgreSQL, UPDATE is really INSERT + DELETE.

That single fact explains almost every surprising thing Postgres does. It explains why your table keeps growing after a batch job that only modifies rows. It explains why VACUUM exists at all. It explains why a table with 10 million live rows can occupy space on disk that would comfortably hold 40 million. It explains why autovacuum_vacuum_scale_factor shows up in every tuning guide and why nobody agrees on what to set it to.

The existing Postgres performance tuning post on this site is a list of levers to pull. This post is the “why” — the storage model, the visibility rules, and the maintenance loop that those levers are actually tuning. If you have ever stared at a query plan and wondered why Postgres scanned 4 GB to return 12 rows from a tiny table, the answer is in here.

Target audience: senior backend engineers who already know that an index can help a WHERE clause and want to understand the machinery underneath.

Pages, Tuples, and the ctid Address

Postgres stores tables as a sequence of fixed-size pages, 8 KB by default. Every heap relation on disk is just a file — or set of files, one per gigabyte — full of 8 KB pages numbered from zero.

Inside a page, rows are called tuples. A tuple is not just your column data. It is:

- A 23-byte

HeapTupleHeaderDataheader - An optional null bitmap

- The column values, laid out according to the table’s attribute order and alignment rules

The header is where the interesting fields live:

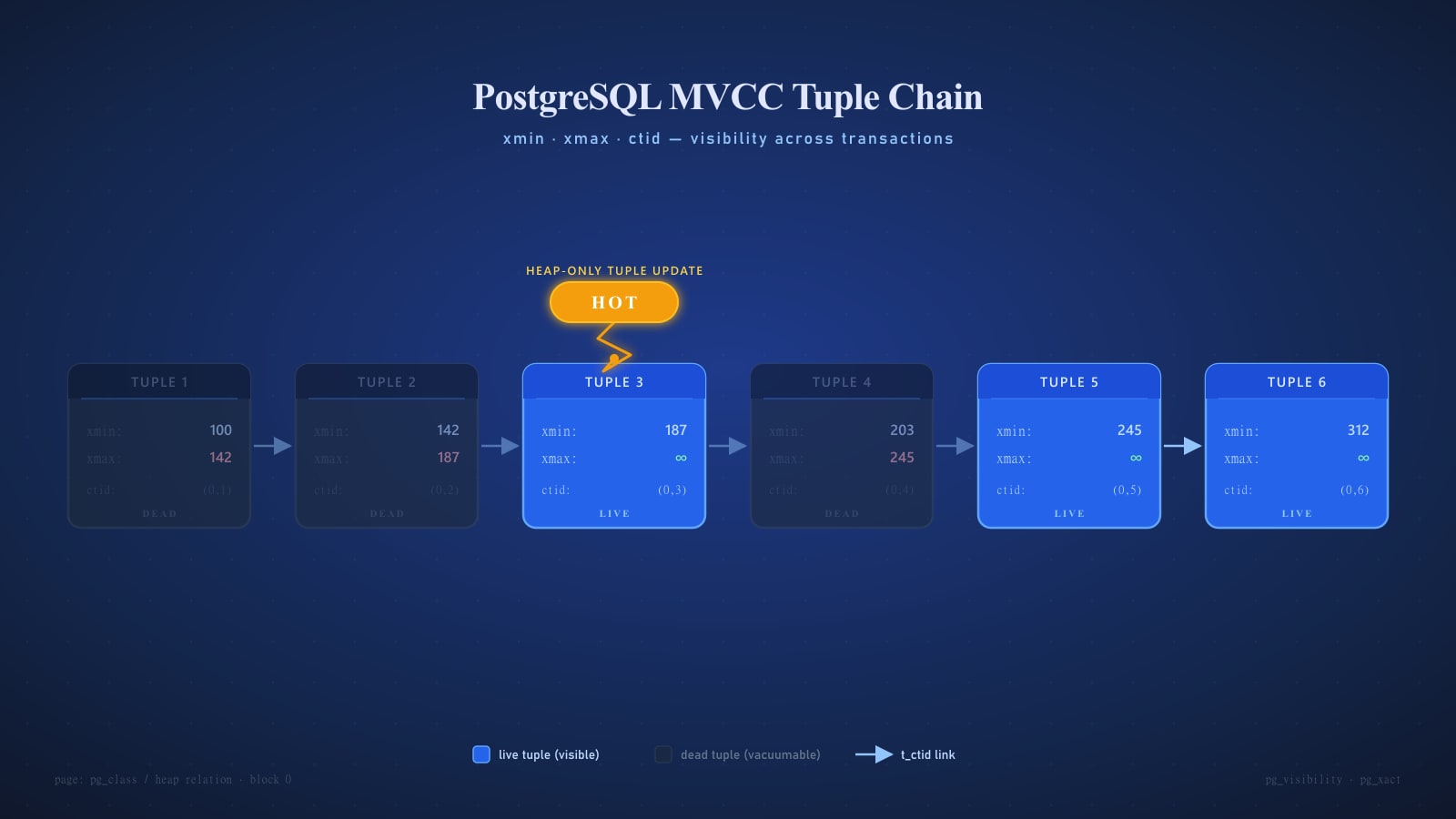

t_xmin— the transaction ID that inserted this tuplet_xmax— the transaction ID that deleted or updated it (zero if still live)t_cid— command ID within a transactiont_ctid— a physical pointer to the next version of this row, if anyt_infomask/t_infomask2— flag bits including hint bits, HOT status, freeze status, and null flags

Every row has an address called a ctid, written as (block, offset). Block 0, offset 3 is (0,3). It is the physical location inside the file. Indexes — for non-HOT entries — store the ctid of the row they point to. When the row moves to a new physical location, old index entries become stale and the new location needs a new entry.

That last paragraph is the entire reason HOT updates exist. More on that below.

MVCC Essentials

PostgreSQL uses Multi-Version Concurrency Control (MVCC) rather than the lock-everything-and-hope strategy that older databases used. The core idea is:

- Writers never block readers.

- Readers never block writers.

- Each transaction sees a consistent snapshot of the database as of some point in time.

The mechanism is exactly what the header suggests. Every tuple is stamped with the transaction that created it (xmin) and, when applicable, the transaction that removed it (xmax). A transaction running with snapshot S sees a tuple if and only if:

xminis committed and visible toS, andxmaxis zero, aborted, or not yet visible toS

An UPDATE is then conceptually:

- Mark the old tuple’s

xmax = current_xid. - Insert a new tuple with

xmin = current_xidandxmax = 0. - Point the old tuple’s

t_ctidat the new tuple.

Nothing is overwritten. The old version stays on disk, visible to anyone whose snapshot predates the update, until a vacuum reclaims it.

Seeing It Yourself

The best way to internalize this is to look. Postgres exposes ctid, xmin, and xmax as pseudo-columns on every table.

CREATE TABLE toy (id int PRIMARY KEY, note text);

INSERT INTO toy VALUES (1, 'original');

SELECT ctid, xmin, xmax, * FROM toy;

-- ctid | xmin | xmax | id | note

-- -------+------+------+----+----------

-- (0,1) | 742 | 0 | 1 | original

UPDATE toy SET note = 'first edit' WHERE id = 1;

SELECT ctid, xmin, xmax, * FROM toy;

-- ctid | xmin | xmax | id | note

-- -------+------+------+----+------------

-- (0,2) | 743 | 0 | 1 | first edit

UPDATE toy SET note = 'second edit' WHERE id = 1;

SELECT ctid, xmin, xmax, * FROM toy;

-- ctid | xmin | xmax | id | note

-- -------+------+------+----+-------------

-- (0,3) | 744 | 0 | 1 | second editOnly one live row is visible, but two dead versions are still sitting at (0,1) and (0,2). You can confirm that with the pageinspect extension:

CREATE EXTENSION IF NOT EXISTS pageinspect;

SELECT lp, t_xmin, t_xmax, t_ctid, t_infomask2

FROM heap_page_items(get_raw_page('toy', 0));

-- lp | t_xmin | t_xmax | t_ctid | t_infomask2

-- ----+--------+--------+--------+-------------

-- 1 | 742 | 743 | (0,2) | 16386

-- 2 | 743 | 744 | (0,3) | 40962

-- 3 | 744 | 0 | (0,3) | 32770Three item pointers. The first two have an xmax, meaning they were superseded. The t_ctid chain walks from old to new: (0,1) → (0,2) → (0,3). The 16386 and 40962 values in t_infomask2 are bit flags — the top bits say “this tuple was HOT updated” and “this is a heap-only tuple.” That is the next topic.

Why SELECTs Don’t Lock

A plain SELECT in Postgres takes no row-level locks. It does take a lightweight AccessShareLock on the table to block DROP, but nothing at the row level. Two transactions reading the same row concurrently are entirely independent.

How? Snapshot isolation. At statement start (for READ COMMITTED) or transaction start (for REPEATABLE READ), Postgres captures a snapshot consisting of:

xmin— the oldest transaction still runningxmax— the next transaction ID to be assignedxip[]— the list of in-progress transaction IDs between the two

When scanning, each tuple’s t_xmin and t_xmax are checked against that snapshot plus the commit log (clog). A tuple is visible if its creator committed before the snapshot was taken and its destroyer either doesn’t exist or committed after. No locks are needed, because the data itself carries enough information to decide visibility.

The corollary: a long-running transaction holds its snapshot open, and every row that gets updated or deleted during that transaction’s life must stick around — even if no other session will ever read it — until that transaction finishes. This is how a stuck reporting query can cause your production OLTP table to bloat by gigabytes. Postgres cannot clean up what might still be visible to somebody.

HOT: Heap-Only Tuple Updates

Back to that chain of dead tuples. Consider what happens to indexes when a row moves from (0,1) to (0,2). If every index entry points to (0,1), every index needs a new entry pointing to (0,2) — a write amplification disaster on tables with many indexes.

Postgres avoids this with the Heap-Only Tuple (HOT) optimization. A HOT update is an update where:

- The new row version fits on the same heap page as the old one.

- No indexed column has changed.

When both conditions hold, Postgres:

- Writes the new version on the same page.

- Chains the old tuple’s

t_ctidto the new one. - Marks the new tuple as heap-only (

HEAP_ONLY_TUPLE) and the old tuple as HOT updated (HEAP_HOT_UPDATED). - Does not touch any index.

Index lookups land on the old tuple, see the HOT flag, and follow the ctid chain to the live version. The indexes never needed to know the row moved.

The two conditions are what make HOT possible. If your update changes a column in any index — even an index you forgot about, even a partial index’s WHERE column — you lose HOT and pay the full cost: new heap tuple plus one new entry in every index.

You can monitor the ratio:

SELECT relname,

n_tup_upd,

n_tup_hot_upd,

round(100.0 * n_tup_hot_upd / NULLIF(n_tup_upd, 0), 1) AS hot_pct

FROM pg_stat_user_tables

ORDER BY n_tup_upd DESC

LIMIT 20;A healthy OLTP table doing in-place updates on non-indexed columns should be above 80% HOT. If a busy table is at 5%, it is because something in every update touches an index — often an updated_at column that somebody indexed for sort order. Dropping that index or choosing a different strategy is frequently the single biggest write-performance win available.

The Bloat Problem

Dead tuples accumulate. That is not a design flaw; it is the cost of letting readers and writers coexist without locks. It becomes a problem when dead tuples outnumber live ones, or when pages are so fragmented that a sequential scan reads mostly empty space.

There are two flavors of bloat:

- Table bloat — dead tuples in heap pages. A 1 GB table might have 200 MB of live data and 800 MB of dead space that no query will ever see again.

- Index bloat — stale index entries pointing to dead tuples, or dead leaf pages that B-tree rebalancing left orphaned. B-trees never shrink on their own; they only get wider.

The pgstattuple extension measures bloat authoritatively — at the cost of scanning the whole table:

CREATE EXTENSION IF NOT EXISTS pgstattuple;

SELECT * FROM pgstattuple('orders');

-- table_len | 1073741824

-- tuple_count | 4821305

-- tuple_len | 689432716

-- tuple_percent | 64.21

-- dead_tuple_count | 1842910

-- dead_tuple_len | 263489520

-- dead_tuple_percent| 24.54

-- free_space | 88901632

-- free_percent | 8.28

SELECT * FROM pgstattuple_approx('orders'); -- sampled, much fasterApproximately a quarter of this table is dead weight. That is room for improvement but not an emergency. At 50%+ dead it becomes an emergency: every sequential scan reads twice the I/O it should, the buffer cache churns, and query plans quietly degrade.

For indexes, pgstatindex tells you the equivalent story:

SELECT * FROM pgstatindex('orders_customer_id_idx');

-- version | 4

-- tree_level | 3

-- index_size | 184549376

-- root_block_no | 412

-- internal_pages | 487

-- leaf_pages | 21941

-- empty_pages | 0

-- deleted_pages | 6104

-- avg_leaf_density | 52.11

-- leaf_fragmentation | 18.3avg_leaf_density well under the 90%-ish target plus a high deleted_pages count means this index has been churning and never rebuilt.

VACUUM: What It Actually Does

VACUUM has three jobs, and understanding which one you care about is half of tuning it.

1. Reclaim space from dead tuples.

For each dead tuple whose xmax is older than every current snapshot, VACUUM marks its line pointer as reusable. The page’s free space is updated in the Free Space Map (FSM) so future inserts can use it. The table file on disk does not shrink. The space is recycled inside the existing extents.

2. Freeze old transaction IDs.

The 32-bit xmin values wrap around every 4 billion transactions. VACUUM rewrites tuples older than vacuum_freeze_min_age so their xmin is replaced with a special “frozen” marker that is considered visible to every future snapshot. Without this, the cluster would eventually shut itself down to prevent data corruption (see the wraparound section).

3. Update the visibility map and statistics.

The visibility map tracks which pages are “all-visible” (no tuple needs visibility checking), enabling index-only scans. VACUUM updates this map and, when run as VACUUM ANALYZE, also refreshes the statistics the planner uses to cost queries.

The Three Variants

VACUUM table— the normal, concurrent version. Takes aShareUpdateExclusiveLockthat allows reads and writes. Does not return disk space to the OS.VACUUM FULL table— rewrites the entire table into a new file, eliminates all dead space, then swaps it in. Returns disk space to the OS. Takes anAccessExclusiveLock— blocks everything including reads — for the duration. On a 200 GB table this is a multi-hour outage. Almost never the right choice in production. Usepg_repackorpg_squeezeinstead for online equivalents.VACUUM FREEZE table— aggressively freezes every eligible tuple, not just those pastvacuum_freeze_min_age. Useful before a pg_upgrade or when you know wraparound is imminent, noisy otherwise.

Autovacuum

Nobody runs VACUUM by hand in a normal system. The autovacuum launcher spawns worker processes that pick tables to vacuum based on thresholds.

A table becomes eligible for autovacuum when:

dead_tuples > autovacuum_vacuum_threshold

+ autovacuum_vacuum_scale_factor * reltuplesThe defaults are threshold = 50, scale_factor = 0.2. That means a table with 10 million rows waits until it has 2 million dead tuples before being touched. On a hot OLTP table doing 10,000 updates per second, that is a mountain of bloat before autovacuum even starts, and by the time it finishes the next mountain is already building.

The cure is per-table tuning:

ALTER TABLE orders SET (

autovacuum_vacuum_scale_factor = 0.02, -- 2% instead of 20%

autovacuum_vacuum_threshold = 1000,

autovacuum_analyze_scale_factor = 0.01,

autovacuum_vacuum_cost_limit = 2000, -- let workers run faster

autovacuum_vacuum_cost_delay = 2 -- ms between I/O batches

);The cost-based delay is the other half of tuning. Autovacuum workers run with an I/O budget — cost_limit work units, then sleep cost_delay milliseconds. The defaults are deliberately conservative so vacuum does not starve foreground queries. On modern SSD-backed systems those defaults are often 10x too slow. A busy table can accumulate dead tuples faster than autovacuum can clean them at default settings, and it falls progressively further behind until you notice via bloat alarms.

A minimal autovacuum configuration for a write-heavy cluster on fast storage:

# postgresql.conf fragments — illustrative, not one-size-fits-all

autovacuum: on

autovacuum_max_workers: 6

autovacuum_naptime: 15s

autovacuum_vacuum_cost_delay: 2ms

autovacuum_vacuum_cost_limit: 2000

autovacuum_vacuum_scale_factor: 0.05

autovacuum_vacuum_insert_scale_factor: 0.10 # v13+

autovacuum_analyze_scale_factor: 0.02

maintenance_work_mem: 1GB # lets vacuum batch index cleanupIncrease maintenance_work_mem only if the machine has the RAM to spare — each autovacuum worker can use up to this amount. Setting 1GB with 6 workers and a 2 GB box is a recipe for OOM.

Transaction ID Wraparound

The 32-bit transaction counter wraps. When it does, a transaction ID from 2 billion transactions ago becomes “in the future” and its tuples become invisible. Your data would effectively disappear.

Postgres prevents this with the freeze mechanism described earlier. But if autovacuum falls far enough behind that tuples exist whose xmin is approaching wraparound distance, Postgres will:

- At

autovacuum_freeze_max_age(default 200 million), launch aggressive anti-wraparound autovacuums that cannot be cancelled. - At

vacuum_failsafe_age(default 1.6 billion), skip cost limits and index cleanup to freeze faster. - At about 3 million transactions before wraparound, refuse new transactions and log:

ERROR: database is not accepting commands to avoid wraparound data loss

You are now offline. Recovery is a single-user-mode VACUUM FREEZE, which on a large cluster takes hours to days.

Monitor this always:

SELECT datname,

age(datfrozenxid) AS xid_age,

2^31 - age(datfrozenxid) AS xids_remaining,

round(100.0 * age(datfrozenxid) / 2^31, 2) AS pct_used

FROM pg_database

ORDER BY age(datfrozenxid) DESC;If pct_used ever exceeds 50%, autovacuum is losing the race. Investigate immediately. Causes are almost always (a) a very long-lived transaction or replication slot holding back the xmin horizon, (b) autovacuum settings too conservative for the write rate, or (c) a stuck autovacuum worker hitting a lock it cannot acquire.

Indexes and Bloat

Index bloat is a separate beast. A VACUUM on the table does a pass over each of its indexes to remove entries pointing at removed heap tuples. But B-tree internal structure does not collapse on its own: once a leaf page is empty it can be reused, but pages above it retain their keys, and the tree never gets shorter.

After months of heavy churn, a B-tree on a 5 GB table can itself be 8 GB. The fix is to rebuild it:

REINDEX INDEX CONCURRENTLY orders_customer_id_idx;

REINDEX TABLE CONCURRENTLY orders; -- all indexes on the tableCONCURRENTLY (added in Postgres 12) builds a new index alongside the old one, swaps them atomically, and drops the old one — all without blocking writes. It takes roughly 2-3x longer than a plain REINDEX and uses extra disk, but it is safe to run in production. If a concurrent reindex fails partway, you are left with an invalid index that needs to be dropped explicitly before retrying.

As a rule of thumb, schedule a REINDEX CONCURRENTLY on your hottest tables once a quarter. Monitor with pgstatindex as shown earlier.

Fill Factor: When It Actually Helps HOT

Every table and index has a fillfactor — the percentage of a page Postgres fills during INSERT. The default is 100 for tables (fill completely) and 90 for B-trees (leave 10% slack for leaf growth).

Lowering a table’s fillfactor — say to 80 — leaves 20% of every page empty on insert. That matters because HOT updates require the new tuple to fit on the same page. With a page already 100% full, updates cannot be HOT and have to migrate to a new page (and update every index). With 20% spare, most updates stay in place.

ALTER TABLE sessions SET (fillfactor = 80);

-- Existing pages are unchanged; new pages use the new fill factor.

VACUUM FULL sessions; -- or pg_repack, to actually re-pack with the new fill factorOnly do this for tables that are update-heavy on non-indexed columns. On insert-only tables (event logs, immutable facts) a low fillfactor is pure waste. On update-heavy tables where every update changes an indexed column, it does not help either, because those updates cannot be HOT in the first place. The sweet spot is mutable rows whose index columns are stable — session heartbeats, counters, “last seen” timestamps that are not indexed.

Practical Diagnostics

A short list of statistics views that tell you what is actually happening:

-- Which tables are accumulating dead tuples fastest?

SELECT schemaname, relname,

n_live_tup, n_dead_tup,

round(100.0 * n_dead_tup / NULLIF(n_live_tup + n_dead_tup, 0), 1) AS dead_pct,

last_vacuum, last_autovacuum, autovacuum_count

FROM pg_stat_user_tables

WHERE n_dead_tup > 1000

ORDER BY dead_pct DESC NULLS LAST

LIMIT 20;

-- What is autovacuum working on right now?

SELECT pid, datname, relid::regclass AS table,

phase, heap_blks_total, heap_blks_scanned, heap_blks_vacuumed,

index_vacuum_count

FROM pg_stat_progress_vacuum;

-- Which sessions are holding the xmin horizon back?

SELECT pid, usename, state, backend_xmin,

now() - xact_start AS xact_age, query

FROM pg_stat_activity

WHERE backend_xmin IS NOT NULL

ORDER BY backend_xmin

LIMIT 10;

-- Replication slots can also hold xmin; check them too

SELECT slot_name, active, xmin, catalog_xmin,

pg_size_pretty(pg_wal_lsn_diff(pg_current_wal_lsn(), restart_lsn)) AS retained_wal

FROM pg_replication_slots;The pattern: high n_dead_tup with old last_autovacuum means autovacuum is starving. A query from the third block with a very old backend_xmin means somebody has forgotten to commit a transaction and is preventing cleanup cluster-wide. An inactive replication slot from the fourth block can pin the xmin horizon indefinitely and is a classic cause of runaway bloat.

A useful shell-level probe, run from psql:

psql -X -c "SELECT relname, pg_size_pretty(pg_total_relation_size(relid)) AS size

FROM pg_stat_user_tables

ORDER BY pg_total_relation_size(relid) DESC

LIMIT 10;"

psql -X -c "SELECT relname, pg_size_pretty(pg_indexes_size(relid)) AS idx

FROM pg_stat_user_tables

ORDER BY pg_indexes_size(relid) DESC

LIMIT 10;"If the index size rivals or exceeds the heap size on an old, churny table, you have index bloat waiting to be reindexed.

Closing Checklist

Before you walk away from a Postgres cluster you intend to run in production, verify:

- You know your HOT ratio on the top 10 write-heavy tables. Below 50% means an indexed column is being written too often — find it and decide whether the index pays for itself.

- Per-table autovacuum is configured on hot tables. Defaults are for a 1999 workload.

maintenance_work_memis high enough (256 MB minimum; 1 GB on larger boxes) for vacuum to clean indexes in a single pass.- Wraparound is monitored. Alert at 40% consumed, page at 60%.

- Long-running transactions are bounded. Set

idle_in_transaction_session_timeout. Monitorbackend_xminage. - Replication slots are healthy. An abandoned slot is a ticking bomb; alarm on inactive slots older than an hour.

- Quarterly REINDEX CONCURRENTLY on heavy-churn indexes. Measure with

pgstatindex, do not guess. - Fill factor 80-90 on update-heavy tables whose updates do not touch index columns. Default everywhere else.

VACUUM FULLis in your incident runbook, not your maintenance runbook. If you are reaching for it regularly, something upstream is wrong.

Postgres is, at its heart, a very principled database. Every behavior that surprises you — the growing files, the creeping query latency, the 3 AM autovacuum storm — follows directly from the MVCC model plus the storage format plus the maintenance loop. Once you can see the tuples, the xmin/xmax dance, and the ctid chains, the tuning knobs stop being arbitrary and start being obvious.

Further Reading

- PostgreSQL Documentation: Concurrency Control — the canonical description of MVCC and isolation levels. Short and worth reading end to end.

- PostgreSQL Documentation: Routine Database Maintenance Tasks — the official VACUUM and autovacuum chapter.

- The Internals of PostgreSQL — Hironobu Suzuki’s free online book. The chapters on Concurrency Control and VACUUM Processing are the best free deep dive available.

src/backend/access/heap/README.HOTin the Postgres source tree — a surprisingly readable design document for the HOT mechanism, written by the developers who built it.

Postgres rewards the operators who understand it. Spend an afternoon with pageinspect and pgstattuple on a staging copy of your production database. You will never read a query plan the same way again.