Monitoring vs Observability

These terms get used interchangeably, but the distinction matters. Monitoring is watching predefined metrics and alerting when they cross thresholds — CPU usage above 90%, error rate above 1%, response time above 500ms. It answers “is something wrong?”

Observability is the ability to understand your system’s internal state by examining its outputs. It answers “why is this specific request slow?” and “what changed between yesterday and today?” You need both, but observability is what lets you debug novel problems that your monitoring dashboards weren’t designed to catch.

The Three Pillars

Metrics

Metrics are numeric measurements over time: request count, error rate, latency percentiles, queue depth, CPU usage. They’re cheap to store, fast to query, and essential for alerting and dashboards.

I use the RED method for service metrics: Rate (requests per second), Errors (error rate), and Duration (latency distribution). For infrastructure, I use the USE method: Utilization, Saturation, and Errors. Between these two frameworks, you cover the critical health indicators for any system.
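To make the RED method concrete, here is a minimal sketch that derives the three numbers from a batch of request records for one time window. The `Request` record, the `red_summary` function, and the nearest-rank percentile are illustrative assumptions, not part of any particular metrics library; a real setup would typically compute these in the metrics backend rather than in application code.

```python
import math
from dataclasses import dataclass


@dataclass
class Request:
    """Hypothetical record of one handled request."""
    duration_ms: float
    ok: bool


def red_summary(requests, window_seconds):
    """Compute Rate, Errors, and Duration (p50/p99) for one time window."""
    if not requests:
        return {"rate_per_s": 0.0, "error_ratio": 0.0, "p50_ms": None, "p99_ms": None}

    durations = sorted(r.duration_ms for r in requests)

    def percentile(p):
        # Nearest-rank percentile: the smallest sample that has at least
        # p percent of all samples at or below it.
        rank = max(1, math.ceil(p / 100 * len(durations)))
        return durations[rank - 1]

    errors = sum(1 for r in requests if not r.ok)
    return {
        "rate_per_s": len(requests) / window_seconds,  # Rate
        "error_ratio": errors / len(requests),         # Errors
        "p50_ms": percentile(50),                      # Duration
        "p99_ms": percentile(99),
    }


sample = [Request(20, True), Request(35, True), Request(50, True), Request(900, False)]
summary = red_summary(sample, window_seconds=2)
print(summary)
```

Note how the single 900ms request dominates the p99 while leaving the p50 untouched, which is why Duration is tracked as a distribution rather than an average.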

Instrument your application with four metric types: counters (values that only increase, such as total requests or total errors), gauges (point-in-time values that can rise or fall, such as active connections or queue size), histograms (distributions of observed values, such as request latency or payload size), and summaries (percentiles pre-calculated by the application itself).
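The difference between the first three types is easiest to see in code. The classes below are a simplified, in-process sketch written for illustration only; they are not the API of any real metrics client, and the bucket bounds are assumed values. In practice you would use your metrics library's own counter, gauge, and histogram types.

```python
import bisect


class Counter:
    """Value that only increases, e.g. total requests."""

    def __init__(self):
        self.value = 0

    def inc(self, amount=1):
        if amount < 0:
            raise ValueError("counters can only increase")
        self.value += amount


class Gauge:
    """Point-in-time value that can go up or down, e.g. active connections."""

    def __init__(self):
        self.value = 0

    def set(self, value):
        self.value = value


class Histogram:
    """Sorts observations into buckets defined by upper bounds."""

    def __init__(self, bounds):
        self.bounds = sorted(bounds)
        # One extra bucket at the end catches values above the largest bound.
        self.bucket_counts = [0] * (len(self.bounds) + 1)
        self.count = 0
        self.total = 0.0

    def observe(self, value):
        # bisect_left returns the index of the first bound >= value.
        self.bucket_counts[bisect.bisect_left(self.bounds, value)] += 1
        self.count += 1
        self.total += value

    def cumulative(self):
        """Return counts of observations at or below each bound, then overall."""
        running, result = 0, []
        for bucket in self.bucket_counts:
            running += bucket
            result.append(running)
        return result


requests_total = Counter()
active_connections = Gauge()
latency_ms = Histogram(bounds=[100, 250, 500])  # assumed bucket bounds

for duration in (40, 120, 480, 2000):
    requests_total.inc()
    latency_ms.observe(duration)
active_connections.set(7)
```

Summaries are left out of the sketch because they require a streaming quantile estimator, which is more than a short example can show honestly.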

Logs

Logs are the narrative of your system — timestamped events describing what happened. The key to useful logs at scale is structure: JSON-formatted logs with consistent fields (timestamp, level, service, trace_id, message, and contextual metadata).

Avoid string concatenation in log messages. Instead of “User 123 placed order 456 for $78.90”, emit a structured log with fields: user_id=123, order_id=456, amount=78.90, event=order_placed. This lets you query logs by any field without parsing text.
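As a rough illustration of that order example, the sketch below emits one JSON object per log line using only Python's standard `logging` module. The `JsonFormatter` class, the `fields` key passed through `extra`, the service name, and the trace ID value are all assumptions made for this example; dedicated structured-logging libraries offer the same idea with less boilerplate.

```python
import json
import logging
from datetime import datetime, timezone


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object."""

    def __init__(self, service):
        super().__init__()
        self.service = service

    def format(self, record):
        entry = {
            "timestamp": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "level": record.levelname,
            "service": self.service,
            "message": record.getMessage(),
        }
        # Anything passed as extra={"fields": {...}} becomes an attribute
        # on the record; merge it in as top-level, queryable fields.
        entry.update(getattr(record, "fields", {}))
        return json.dumps(entry)


logger = logging.getLogger("orders")
handler = logging.StreamHandler()
handler.setFormatter(JsonFormatter(service="order-service"))  # hypothetical service name
logger.addHandler(handler)
logger.setLevel(logging.INFO)

logger.info(
    "order placed",
    extra={"fields": {
        "event": "order_placed",
        "user_id": 123,
        "order_id": 456,
        "amount": 78.90,
        "trace_id": "abc123",  # placeholder value
    }},
)
```

Because `user_id`, `order_id`, and `amount` are separate fields, a log backend can filter or aggregate on them directly instead of running a regular expression over the message text.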

Log levels matter at scale. DEBUG is for development; in production, enable it only while actively investigating an issue. INFO captures normal business events. WARN captures recoverable anomalies. ERROR captures failures that need attention. Be disciplined about levels; a system that logs everything at INFO is as useless as one that logs nothing.

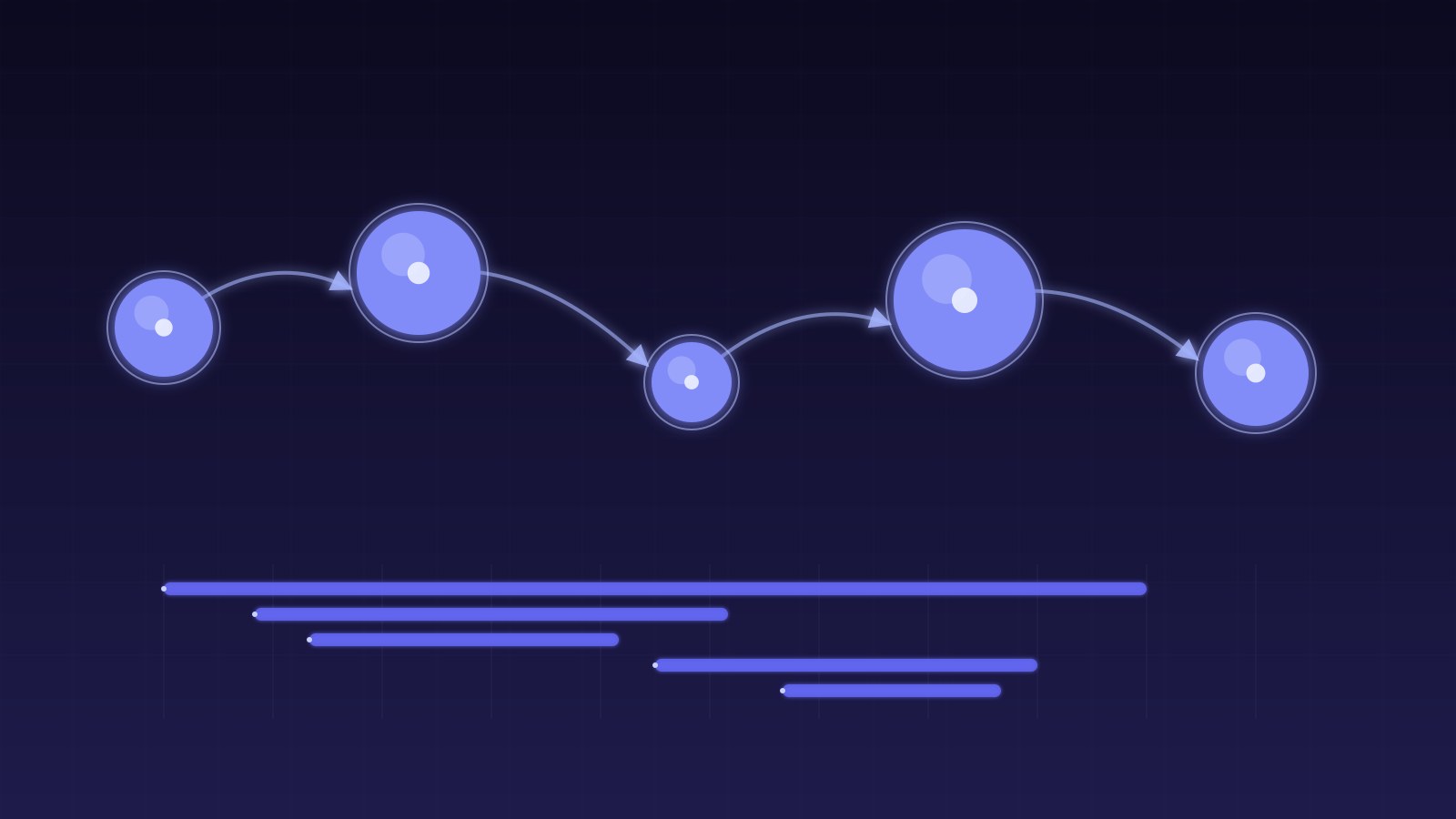

Traces

Distributed traces follow a request through your entire system — from the API gateway through microservices, message queues, and databases. Each service adds a span with timing, metadata, and status. The result is a complete timeline showing exactly where time was spent.

Traces are essential for debugging latency in distributed systems. When a request takes 3 seconds, the trace shows you that 50ms was in service A, 100ms was in service B, 2800ms was waiting for a database query in service C, and 50ms was serialization overhead. Without traces, you’re guessing.

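Implement trace propagation by passing trace context (the trace ID plus the ID of the calling span) on every service call, typically in a request header such as the W3C Trace Context traceparent header, or in message metadata for queues. OpenTelemetry provides standardized libraries for this across languages.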
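To show what propagation involves, here is a hand-rolled sketch that writes and reads a header in the W3C Trace Context `traceparent` format (`version-traceid-parentid-flags`). The function names and the dictionary shape are assumptions for illustration, and the validation is simplified (for instance, it does not reject all-zero IDs). In a real service you would let the OpenTelemetry SDK handle this rather than writing it yourself.

```python
import re
import secrets

# version "00", 32 hex chars of trace ID, 16 hex chars of parent span ID, 2 hex chars of flags
TRACEPARENT = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def new_span_id():
    return secrets.token_hex(8)  # 8 random bytes -> 16 hex characters


def start_trace():
    """Begin a new trace at the edge of the system (e.g. the API gateway)."""
    return {"trace_id": secrets.token_hex(16), "span_id": new_span_id(), "flags": "01"}


def inject(ctx, headers):
    """Attach the current context to outgoing request headers."""
    headers["traceparent"] = f"00-{ctx['trace_id']}-{ctx['span_id']}-{ctx['flags']}"
    return headers


def extract(headers):
    """Continue the caller's trace, or start a new one if no valid header arrived."""
    match = TRACEPARENT.match(headers.get("traceparent", ""))
    if not match:
        return start_trace()
    trace_id, parent_span_id, flags = match.groups()
    return {
        "trace_id": trace_id,              # unchanged across every service
        "parent_span_id": parent_span_id,  # links this span to the caller's span
        "span_id": new_span_id(),          # fresh ID for this service's own work
        "flags": flags,
    }


# Service A starts the trace and calls service B.
ctx_a = start_trace()
outgoing = inject(ctx_a, {})
ctx_b = extract(outgoing)
```

The trace ID stays constant while each hop gets a new span ID, which is what lets the tracing backend reassemble the spans into one timeline.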

Stack Recommendations

Metrics: Prometheus + Grafana

Prometheus is the industry standard for metrics collection. It scrapes endpoints, stores time-series data, and provides a powerful query language (PromQL). Grafana visualizes those metrics in dashboards.

For Kubernetes environments, kube-prometheus-stack gives you a complete monitoring setup: Prometheus, Grafana, Alertmanager, and pre-built dashboards for cluster health, node metrics, and common workloads.

Logs: Loki or Elasticsearch

Grafana Loki is my current recommendation for most teams. It’s cheaper than Elasticsearch, integrates natively with Grafana, and uses label-based indexing that’s efficient for the query patterns most teams actually use.

Elasticsearch (via the ELK stack) is still relevant for teams that need full-text search across logs or have complex aggregation requirements. But for most use cases, Loki’s simpler operational model wins.

Traces: Jaeger or Tempo

Grafana Tempo integrates with the Grafana stack and supports the OpenTelemetry protocol natively. Jaeger is a mature alternative with a strong UI for trace exploration.

The choice depends on your existing stack: if you’re already running Grafana and Loki, Tempo is the natural fit. If you’re on a different stack, Jaeger’s standalone deployment is straightforward.

Alerting That Works

Alert Fatigue Is Real

The fastest way to make on-call miserable is alerting on everything. If your team gets 50 alerts per day, they’ll ignore all of them — including the critical ones. I’ve seen teams where “mute all alerts” was an unofficial policy because the signal-to-noise ratio was so bad.

Alert Design Principles

Alert on symptoms, not causes. Alert on “error rate above 1% for 5 minutes,” not “CPU above 80%.” High CPU might be perfectly fine during a batch job; high error rate is always a problem.

Every alert needs three things: a clear description of what’s wrong, a runbook link explaining how to investigate and remediate, and appropriate severity (page for customer-facing issues, ticket for everything else).

Use alert windows and thresholds that prevent flapping. A brief spike to 2% errors for 30 seconds might be a normal transient, and alerting on it wastes attention. Use multi-window burn rate alerting, where burn rate is how fast the service is consuming its error budget relative to the rate the SLO allows: alert only when the short-term burn rate (last 5 minutes) AND the long-term burn rate (last hour) both exceed their thresholds.
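The two-window check can be sketched in a few lines. Burn rate here is the observed error ratio divided by the error ratio the SLO allows, so a burn rate of 1 uses up the budget exactly at the end of the SLO window. The 99.9% target and the threshold of 14.4 are assumptions for this example (14.4 corresponds to spending 2% of a 30-day budget in one hour, since 14.4 × 1h / 720h = 0.02); pick values that fit your own SLO.

```python
def burn_rate(error_ratio, slo_target):
    """How many times faster than allowed the error budget is being spent."""
    allowed_error_ratio = 1.0 - slo_target
    return error_ratio / allowed_error_ratio


def should_page(short_error_ratio, long_error_ratio, slo_target=0.999, threshold=14.4):
    """Fire only when both the 5-minute and the 1-hour windows burn too fast.

    The long window proves the problem is significant; the short window
    proves it is still happening, so the alert clears quickly after recovery.
    """
    return (
        burn_rate(short_error_ratio, slo_target) >= threshold
        and burn_rate(long_error_ratio, slo_target) >= threshold
    )


# A 30-second blip: the last 5 minutes look bad, the last hour does not.
transient = should_page(short_error_ratio=0.02, long_error_ratio=0.0005)

# A sustained incident: both windows show 2% errors.
sustained = should_page(short_error_ratio=0.02, long_error_ratio=0.02)

# An incident that has already recovered: the hour is bad, the last 5 minutes are fine.
recovered = should_page(short_error_ratio=0.0005, long_error_ratio=0.02)

print(transient, sustained, recovered)
```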

Severity Levels

I use four severity levels: P1 (pages the on-call engineer immediately — service is down or data loss is occurring), P2 (pages during business hours — significant degradation affecting users), P3 (creates a ticket — non-urgent issue that needs attention within a few days), P4 (logged and reviewed weekly — optimization opportunities or cosmetic issues).
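One way to enforce both the required alert parts and the severity routing is to encode them in the alert definition itself. The sketch below is an assumption-laden illustration: the `Alert` and `Severity` types, the `route` function, the action names, and the runbook URL are all hypothetical, and the handling of a P2 outside business hours (holding it until the next working day) is my assumption rather than a fixed rule.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    P1 = 1  # service down or data loss
    P2 = 2  # significant degradation affecting users
    P3 = 3  # non-urgent, needs attention within days
    P4 = 4  # optimization or cosmetic


@dataclass(frozen=True)
class Alert:
    name: str
    description: str
    runbook_url: str
    severity: Severity

    def __post_init__(self):
        # Refuse to define an alert that is missing its description or runbook.
        if not self.description.strip():
            raise ValueError(f"alert {self.name!r} needs a description")
        if not self.runbook_url.strip():
            raise ValueError(f"alert {self.name!r} needs a runbook link")


def route(alert, business_hours):
    """Map an alert to an action; the action names are placeholders."""
    if alert.severity is Severity.P1:
        return "page"
    if alert.severity is Severity.P2:
        # Assumption: outside business hours a P2 waits for the next working day.
        return "page" if business_hours else "hold_until_business_hours"
    if alert.severity is Severity.P3:
        return "ticket"
    return "weekly_review"


checkout_errors = Alert(
    name="checkout-error-rate",
    description="Checkout error rate above 1% for 5 minutes",
    runbook_url="https://runbooks.example.com/checkout-error-rate",  # placeholder URL
    severity=Severity.P1,
)
print(route(checkout_errors, business_hours=False))
```

Validating at definition time means an alert without a runbook fails in code review or CI instead of at 3 a.m.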

P1 alerts should fire less than once per week on average. If you’re getting P1 alerts daily, either your system is genuinely unreliable or your alert thresholds are wrong.

SLOs and Error Budgets

Service Level Objectives (SLOs) formalize your reliability targets. Instead of vague goals like “the service should be fast,” you define specific, measurable objectives: “99.9% of requests complete within 500ms over a 30-day window.”

Error budgets make SLOs actionable. If your SLO is 99.9% availability, you have a budget of 0.1% downtime per month (about 43 minutes). When the budget is healthy, you ship features aggressively. When it’s burning fast, you prioritize reliability work.

This framework turns reliability from a perpetual guilt trip into a data-driven decision: “We’ve used 60% of our error budget this month, so let’s hold off on risky deployments until next month” is a concrete, defensible decision.
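The arithmetic behind those numbers is short enough to write out. This sketch assumes a time-based availability SLO over a 30-day window; the function names are made up for the example, and the 26 minutes of downtime is an illustrative figure chosen to land near the 60% mark.

```python
def error_budget_minutes(slo_target, window_days=30):
    """Total allowed downtime in the window for a time-based availability SLO."""
    return (1.0 - slo_target) * window_days * 24 * 60


def budget_consumed(downtime_minutes, slo_target, window_days=30):
    """Fraction of the error budget already spent."""
    return downtime_minutes / error_budget_minutes(slo_target, window_days)


budget = error_budget_minutes(0.999)               # roughly 43.2 minutes
consumed = budget_consumed(26, slo_target=0.999)   # roughly 0.60

print(f"budget: {budget:.1f} min, consumed: {consumed:.0%}")
```

The same calculation shows why each extra nine is expensive: moving from 99.9% to 99.99% shrinks the monthly budget from about 43 minutes to about 4.3.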

Dashboard Design

The Four Dashboard Layers

Layer 1: Executive dashboard. One screen showing overall system health, SLO status, and key business metrics.

Layer 2: Service dashboards. One per service, showing RED metrics, resource usage, and deployment markers.

Layer 3: Investigation dashboards. Detailed views for debugging specific issues (database performance, cache hit rates, queue depths).

Layer 4: Infrastructure dashboards. Node health, disk usage, network metrics.

Most engineers should live on Layer 2 and only drill into Layer 3 when investigating issues. If your team spends most of their time on infrastructure dashboards, something is wrong with your platform.

Getting Started

If you have no monitoring today, start with these three things: instrument your application with RED metrics (request rate, error rate, duration), set up structured logging with correlation IDs, and create one alert for each service’s error rate exceeding 1% for 5 minutes. This minimal setup catches the majority of production issues and gives you a foundation to build on.