Decouple Deploy from Release

A deploy puts code on a server. A release exposes behavior to a user. For most of software history these two events were the same thing: git push at 2pm on a Friday and hope. Feature flags split them apart. Once you can ship code dark and turn it on later, every downstream practice changes.

Main stays releasable at all times because unfinished work hides behind an off flag. Rollbacks stop meaning “revert the deploy” and start meaning “flip the flag” — which is seconds instead of minutes and doesn’t require a rebuild. A backend team can merge a half-finished endpoint on Monday and the frontend can wire it up on Thursday, both behind the same flag, with no long-lived branch between them.

The cost is real. Every flag is a branch in production. Untested branches rot. Flags that outlive their purpose become permanent if statements nobody dares delete. The rest of this post is about getting the benefits without drowning in the cost.

TL;DR

- Deploy puts code on a server; release exposes behavior — flags are how you split the two.

- Tag every flag as Release, Kill Switch, Experiment, or Permission — each gets different governance.

- Hide the vendor behind a FlagClient interface so you can swap providers without rewrites.

- Ramp 1 → 5 → 25 → 100 with automated SLO checks and a louder-than-launch auto-rollback.

- Test each flag in isolation with a fake client that errors on undeclared keys, never 2^N matrices.

- Drill kill switches quarterly; if median time-to-kill is over 90 seconds, fix the workflow.

- Track flag debt (count, age, stale, ownerless, 100%-for-14d) and automate cleanup tickets.

The Four Useful Flag Categories

Not every boolean in your codebase is the same kind of flag. Mixing them up is where most flag programs go wrong — the governance, the TTL, and the ownership model are different for each.

| Category | Lifespan | Owner | Dynamic? | Example |
|---|---|---|---|---|
| Release | Days to weeks | Feature team | Yes | “new checkout flow”: on for 5%, ramping to 100% |
| Kill switch | Permanent | Platform / SRE | Yes | “disable recommendations if provider is down” |
| Experiment | Weeks | Product / data | Yes | A/B test on onboarding copy |
| Permission | Permanent | Product | Yes | “beta tier can access AI assistant” |

Release flags are temporary by definition. The other three are long-lived and need different rules. Lumping permissions under “feature flags” is technically true and operationally disastrous — permissions want audit logs, approval workflows, and stable contracts, while release flags want to be deleted the week after GA.

A healthy flag platform lets you tag each flag with its category and applies different hygiene rules accordingly. If your tool doesn’t, you’ll end up with a 200-flag dashboard where nobody remembers which ones are safe to remove.

Evaluation Models

Every flag platform eventually exposes the same four evaluation modes. The names differ, the mental model doesn’t.

Boolean. On or off globally. Trivially useful for kill switches, useless for rollouts.

Rollout percentage. On for N% of a hashed key (user ID, session ID, tenant ID). The hash matters: if you hash randomly per request, a user flickers between variants. If you hash on user ID, they see a consistent experience.

Rule-based. On if country == "TH", off otherwise. Simple attribute matching, usually authored in a web UI.

Targeting. On for users with plan == "pro" AND signup_date > 2026-01-01, ramping to 50% of the rest. Combines attributes with percentage rollout. This is what you actually want in production.
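To make the last two modes concrete, here is a minimal Python sketch of sticky percentage bucketing combined with attribute targeting. It assumes SHA-256 as the hash and a hypothetical TargetingRule shape with equality-only matching. Real platforms use their own hash functions and richer operators (date comparisons, segments), so read it as an illustration of the idea rather than any vendor's algorithm.

```python
import hashlib
from dataclasses import dataclass, field


def bucket(flag_key: str, unit_id: str) -> float:
    """Map (flag key, unit ID) to a stable value in [0, 100)."""
    digest = hashlib.sha256(f"{flag_key}:{unit_id}".encode("utf-8")).digest()
    # Salting with the flag key gives each flag its own bucketing of the same users.
    return int.from_bytes(digest[:8], "big") % 10_000 / 100


@dataclass
class TargetingRule:
    """Hypothetical rule: attribute matches are always on; everyone else gets a percentage."""
    match: dict[str, str] = field(default_factory=dict)
    rollout_percent: float = 0.0


def is_enabled(flag_key: str, user_id: str, attributes: dict[str, str], rule: TargetingRule) -> bool:
    # An attribute match (for example plan == "pro") wins outright.
    if rule.match and all(attributes.get(k) == v for k, v in rule.match.items()):
        return True
    # Everyone else is admitted by their stable bucket.
    return bucket(flag_key, user_id) < rule.rollout_percent


rule = TargetingRule(match={"plan": "pro"}, rollout_percent=50)
print(is_enabled("checkout_v2", "user-123", {"plan": "pro"}, rule))  # True: attribute match
```

Because the bucket depends only on the flag key and the user ID, raising the percentage only adds users. Nobody who already has the feature gets flipped off mid-ramp.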

Server-side evaluation in TypeScript and Python:

    // Node / Express with a thin provider interface.
    // app, flags, checkoutV1, and checkoutV2 are assumed to be defined elsewhere;
    // req.user is assumed to be populated by auth middleware.
    interface FlagClient {
      isEnabled(key: string, ctx: EvalContext): Promise<boolean>;
      getVariant<T>(key: string, ctx: EvalContext, fallback: T): Promise<T>;
    }

    interface EvalContext {
      userId: string;
      attributes: { plan?: string; country?: string; signupDate?: string };
    }

    app.get("/api/checkout", async (req, res) => {
      // Build the evaluation context explicitly from the authenticated user.
      const ctx: EvalContext = {
        userId: req.user.id,
        attributes: {
          plan: req.user.plan,
          country: req.user.country,
          signupDate: req.user.signupDate,
        },
      };
      if (await flags.isEnabled("checkout_v2", ctx)) {
        return res.json(await checkoutV2(req));
      }
      return res.json(await checkoutV1(req));
    });

The same endpoint with FastAPI:

    # FastAPI with the same provider interface.
    # app, get_flags, checkout_v1, and checkout_v2 are assumed to be defined elsewhere.
    from dataclasses import dataclass
    from typing import Protocol, TypeVar

    from fastapi import Depends, Request

    T = TypeVar("T")


    class FlagClient(Protocol):
        async def is_enabled(self, key: str, ctx: "EvalContext") -> bool: ...
        async def get_variant(self, key: str, ctx: "EvalContext", fallback: T) -> T: ...


    @dataclass
    class EvalContext:
        user_id: str
        attributes: dict[str, str]


    @app.get("/api/checkout")
    async def checkout(request: Request, flags: FlagClient = Depends(get_flags)):
        user = request.state.user
        # Build the evaluation context explicitly from the authenticated user.
        ctx = EvalContext(
            user_id=user.id,
            attributes={
                "plan": user.plan,
                "country": user.country,
                "signup_date": user.signup_date,
            },
        )
        if await flags.is_enabled("checkout_v2", ctx):
            return await checkout_v2(request)
        return await checkout_v1(request)

Two things to notice. First, the app depends on a FlagClient interface, not on a vendor SDK directly; swapping providers later is a wiring change, not a rewrite. Second, the evaluation context is explicit. For a given ruleset, a flag decision should be a pure function of (flag key, context); anything else is unreproducible and untestable.

On the React side, the same discipline:

    import { useEffect, useState } from "react";

    // A thin hook that renders the fallback first, then subscribes to flag changes.
    // useFlagClient, client.evaluate, and client.onChange come from your own provider wrapper.
    function useFlag(key: string, fallback: boolean): boolean {
      const [value, setValue] = useState<boolean>(fallback);
      const client = useFlagClient();

      useEffect(() => {
        let alive = true;
        client
          .evaluate(key, fallback)
          .then((v) => {
            if (alive) setValue(v);
          })
          .catch(() => {
            // Flag service unreachable: keep rendering the fallback.
          });
        const unsub = client.onChange(key, (v) => alive && setValue(v));
        // Stop updating state once the component unmounts or the inputs change.
        return () => {
          alive = false;
          unsub();
        };
      }, [client, key, fallback]);

      return value;
    }

    function CheckoutPage() {
      const v2 = useFlag("checkout_v2", false);
      return v2 ? <CheckoutV2 /> : <CheckoutV1 />;
    }

The fallback matters: if the flag service is unreachable, the component still renders something sensible. Flags should always degrade to a known-safe state, never crash.

Self-Hosted vs SaaS

There is no universally correct answer here. The trade-off depends on your compliance posture, team size, and how much infrastructure you already own.

| Platform | Model | Strengths | Trade-offs |
|---|---|---|---|
| Unleash | Self-hosted (OSS + paid tiers) | Mature targeting, good SDKs, runs in your VPC | You operate it; UI is utilitarian |
| GrowthBook | Self-hosted or SaaS | Strong experimentation + stats built in | Smaller ecosystem than LaunchDarkly |
| PostHog | Self-hosted or SaaS | Flags bundled with product analytics | Heavyweight if you only want flags |
| LaunchDarkly | SaaS | Polished UX, enterprise governance, broad SDK set | Price scales with MAU; your eval traffic leaves |
| ConfigCat | SaaS | Simple pricing, light SDKs, low operational burden | Fewer targeting primitives than heavier tools |

Self-hosted wins when data residency is non-negotiable, when you already run a Kubernetes cluster and a Postgres, or when your scale makes SaaS pricing absurd. SaaS wins when you don’t want a pager rotation for your flag service, when audit and SSO must work on day one, or when a small team wants to focus on product instead of operating yet another platform.

One underrated factor: evaluation latency. If your SDK evaluates locally against a cached ruleset that it streams or polls in the background (the server-side SDKs for Unleash, LaunchDarkly, and ConfigCat work this way), flag checks take microseconds. If it round-trips to an API on every check, you’ve added a network hop to every request path. Read the SDK docs, not the marketing page.

A minimal Unleash-style config, for flavor:

    # unleash-config.yaml
    features:
      - name: checkout_v2
        description: New checkout flow, gradual rollout
        type: release
        enabled: true
        strategies:
          - name: gradualRolloutUserId
            parameters:
              percentage: "25"
              groupId: checkout_v2
          - name: userWithId
            parameters:
              userIds: "qa-lead,cto,product-lead"
      - name: recommendations_kill
        description: Disable recommendations if vendor SLA breaches
        type: kill-switch
        enabled: false

GrowthBook ships similar YAML/JSON, with extra fields for experiment assignment and statistical analysis.

Flag Lifecycle

A flag is a live wire in production. Treat it like one.

Naming. <area>_<verb>_<object> beats marketing names. checkout_enable_v2 survives a product rebrand; project_falcon doesn’t. Prefix by category if your tool can’t tag: rel_, kill_, exp_, perm_.

Ownership. Every flag has exactly one owner — a team, ideally with an on-call rotation. Orphaned flags are the source of most flag debt. If the owner leaves and nobody claims it, the flag goes on a short-TTL cleanup list.

TTL. Release flags get a date, written in the flag description. Six weeks is a good default. When the date passes, the platform either auto-creates a cleanup ticket or blocks the flag from being modified until someone extends the TTL with a reason.

Cleanup tickets. Wire your flag platform’s webhook into your issue tracker. “Flag checkout_enable_v2 reached 100% rollout 14 days ago” should create a Jira or Linear ticket automatically, assigned to the owner, linking to every call site. Making cleanup a normal scheduled task — not a heroic quarterly project — is the single biggest lever against flag debt.
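The TTL and cleanup rules above are easy to express as a scheduled job. The sketch below is one possible shape in Python, not any platform's API: FlagRecord and the ticket dictionary are hypothetical, the six-week TTL and 14-day threshold are taken from the text, and the step that actually files the ticket in Jira or Linear (and links call sites) is left out.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class FlagRecord:
    """Hypothetical flag metadata, as it might be exported from a flag platform."""
    key: str
    owner: str
    created: date
    ttl_days: int = 42  # six-week default
    full_rollout_since: Optional[date] = None


def cleanup_reasons(flag: FlagRecord, today: date) -> list[str]:
    """Return the reasons, if any, that this flag is due for removal."""
    reasons = []
    if today > flag.created + timedelta(days=flag.ttl_days):
        reasons.append("TTL expired")
    if flag.full_rollout_since and (today - flag.full_rollout_since).days >= 14:
        reasons.append("at 100% rollout for 14+ days")
    return reasons


def cleanup_tickets(flags: list[FlagRecord], today: date) -> list[dict]:
    """Build one ticket payload per flag that needs cleanup, assigned to its owner."""
    tickets = []
    for flag in flags:
        reasons = cleanup_reasons(flag, today)
        if reasons:
            tickets.append(
                {"title": f"Remove flag {flag.key}", "assignee": flag.owner, "reasons": reasons}
            )
    return tickets
```

Running this daily and de-duplicating against open tickets keeps cleanup a routine task rather than a quarterly project.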

Testing With Flags Without Combinatorial Explosion

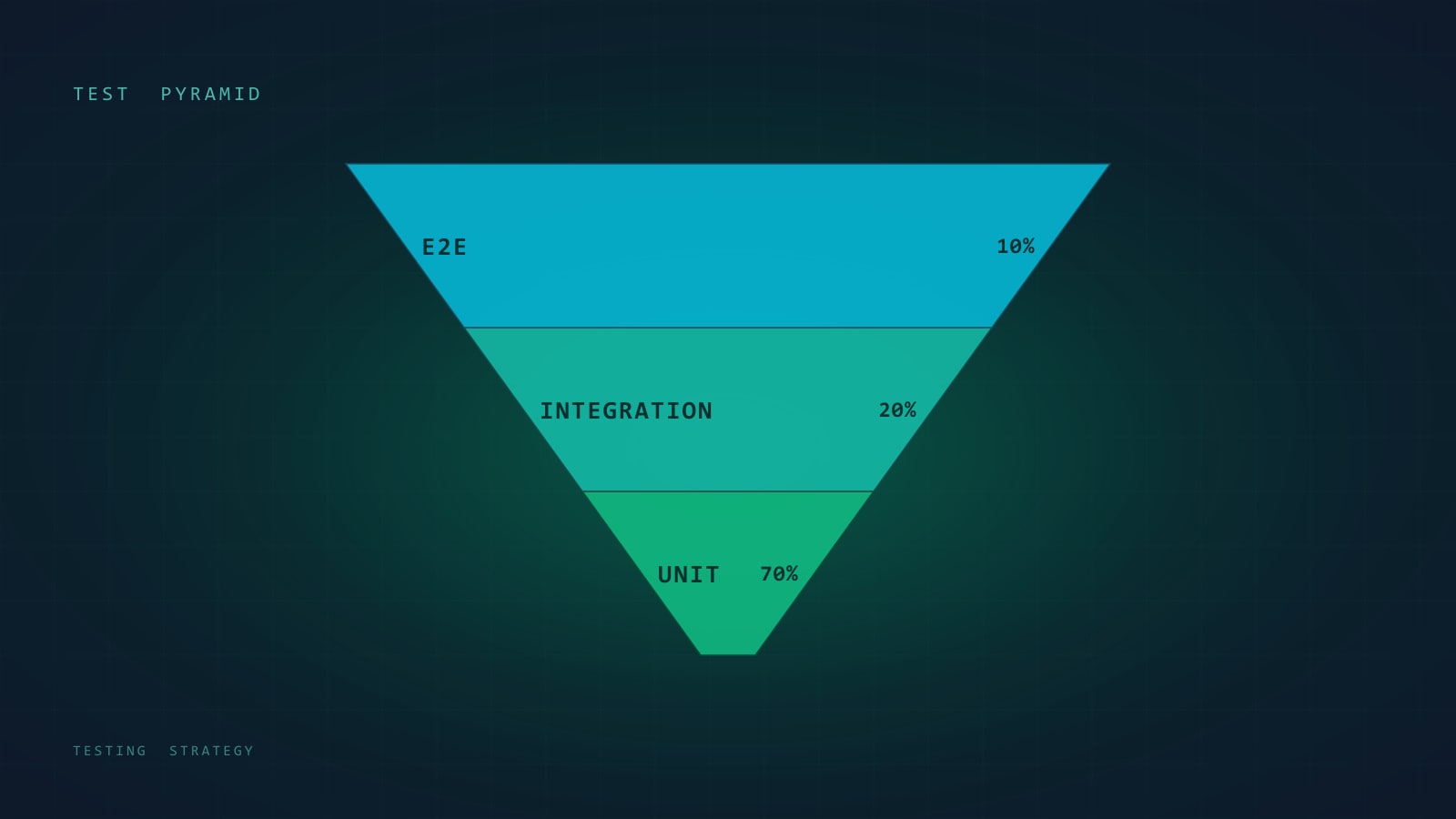

Ten flags means 1024 combinations. Nobody tests that. The trick is to not treat flags as free variables.

Test each flag state in isolation. For every flag, have tests that run with it on and with it off, against the code paths that branch on it. That’s 2 × N tests, not 2^N.

Use default matrices. Define the canonical on/off combinations you actually ship to users: “everything at rollout default” for a PR build, “next release candidate” for staging. Two or three matrices cover 95% of real-world states.

Fail closed in CI. Unit tests should use an in-memory fake flag client that requires every flag it’s asked about to be explicitly declared. An undeclared flag throws — this catches code reading flags the tests forgot to set up.

    // handleCheckout and req are assumed to come from the code under test.
    class FakeFlagClient implements FlagClient {
      constructor(private values: Record<string, boolean>) {}

      async isEnabled(key: string): Promise<boolean> {
        if (!(key in this.values)) {
          throw new Error(`Flag "${key}" not declared in test fixture`);
        }
        return this.values[key];
      }

      // Variants are out of scope for this fake; fail loudly if a test reaches for one.
      async getVariant<T>(key: string): Promise<T> {
        throw new Error(`getVariant("${key}") is not supported by FakeFlagClient`);
      }
    }

    // Test:
    it("uses checkout v2 when flag is on", async () => {
      const flags = new FakeFlagClient({ checkout_v2: true });
      const res = await handleCheckout(req, flags);
      expect(res.version).toBe(2);
    });

The Python equivalent:

    import pytest


    # handle_checkout and req are assumed to come from the code under test.
    class FakeFlagClient:
        def __init__(self, values: dict[str, bool]):
            self.values = values

        async def is_enabled(self, key: str, ctx=None) -> bool:
            if key not in self.values:
                raise RuntimeError(f'Flag "{key}" not declared in test fixture')
            return self.values[key]


    @pytest.mark.asyncio
    async def test_checkout_uses_v2_when_flag_on():
        flags = FakeFlagClient({"checkout_v2": True})
        res = await handle_checkout(req, flags)
        assert res.version == 2

This small discipline catches the single most common flag bug: shipping a code path that reads a flag you never registered.

Rollout Patterns

Knowing how to ramp is as important as whether to ramp.

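Linear ramp. Start at 1% and roughly double every few hours while metrics stay healthy: 1 → 2 → 5 → 10 → 25 → 50 → 100. Despite the name, the steps are geometric rather than linear: exposure stays small while the change is unproven and grows quickly once it has held up. Slow enough to catch regressions, fast enough to finish in a work week.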

Blue/green. Two full environments, flip traffic at the load balancer. Good for database-heavy changes where a flag inside the app is too fine-grained. Bad for feature iteration because you can’t ramp per-user.

Ring deployments. Deploy to rings of increasing trust: ring 0 is you, ring 1 is employees, ring 2 is beta customers, ring 3 is everyone. Microsoft popularized this model for Windows and it maps cleanly to flags: each ring is a targeting rule.

Canary with auto-rollback. The canary serves 1–5% of traffic. A sidecar watches SLOs (error rate, p95 latency, business KPIs like conversion). If any SLO breaches for N minutes, the flag flips off automatically. This is the pattern you want for anything user-visible and high-traffic.

A minimal auto-rollback loop:

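    // metrics, flags, alert, and the SLO type are assumed to come from your own platform wrappers.
    const sleep = (ms: number) => new Promise((resolve) => setTimeout(resolve, ms));

    async function watchCanary(flagKey: string, slos: SLO[]) {
      for (;;) {
        // Evaluate every SLO over the trailing five minutes.
        const window = await metrics.query(slos, { since: "5m" });
        const breached = slos.filter((s) => window[s.name] > s.threshold);
        if (breached.length > 0) {
          // Flip the flag off first, then page a human with the reason.
          await flags.disable(flagKey, {
            reason: `SLO breach: ${breached.map((s) => s.name).join(", ")}`,
          });
          await alert.page(`Auto-rolled back ${flagKey}`);
          return;
        }
        await sleep(60_000);
      }
    }

The auto-rollback has to be louder than a successful rollout. If nobody notices the flag flipped off, you’ll fix the same regression three times.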
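The ramp itself can be driven by the same health signal. A minimal Python sketch, assuming the 1 → 5 → 25 → 100 stages from the TL;DR and a hypothetical boolean health input that some other component computes from your SLOs:

```python
RAMP_STAGES = [1, 5, 25, 100]  # percent of traffic at each stage


def next_percentage(current: int, healthy: bool, stages: list[int] = RAMP_STAGES) -> int:
    """Advance one stage when healthy; drop to 0 (flag off) on any breach."""
    if not healthy:
        return 0
    for stage in stages:
        if stage > current:
            return stage
    # Already at the final stage: stay there.
    return current


print(next_percentage(5, healthy=True))    # 25
print(next_percentage(25, healthy=False))  # 0
```

Keeping the step function pure makes the ramp policy trivial to unit test; the scheduler that calls it and the flag update call stay in separate, thinner code.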

The Dark-Launch Pattern

For performance-sensitive changes — a rewritten query planner, a new caching layer, a reimplemented ranking algorithm — you don’t always want users to see the new result. You want to know if it would have been correct and fast, without the risk of shipping it.

Dark launch: compute both the old and new result, return the old, and record the diff.

    // oldRecommender, newRecommender, flags, and metrics are assumed to be defined elsewhere.
    async function getRecommendations(userId: string): Promise<Rec[]> {
      const oldResult = await oldRecommender(userId);
      if (await flags.isEnabled("rec_v2_dark_launch", { userId, attributes: {} })) {
        // Compute in the shadow; don't block the response.
        setImmediate(async () => {
          const t0 = performance.now();
          try {
            const newResult = await newRecommender(userId);
            metrics.histogram("rec_v2.latency_ms", performance.now() - t0);
            metrics.diff("rec_v2.divergence", oldResult, newResult);
          } catch (err) {
            // A failing shadow path must never affect the user-facing response.
            const type = err instanceof Error ? err.name : "unknown";
            metrics.counter("rec_v2.errors", { type });
          }
        });
      }
      return oldResult;
    }

The same pattern in Python:

    import asyncio
    import time

    # old_recommender, new_recommender, flags, metrics, Rec, and EvalContext are assumed to be defined elsewhere.
    # Keep strong references so fire-and-forget tasks are not garbage-collected mid-flight.
    _shadow_tasks: set[asyncio.Task] = set()


    async def get_recommendations(user_id: str) -> list[Rec]:
        old_result = await old_recommender(user_id)
        if await flags.is_enabled("rec_v2_dark_launch", EvalContext(user_id, {})):
            # Fire and forget; don't block the response.
            task = asyncio.create_task(_shadow_eval(user_id, old_result))
            _shadow_tasks.add(task)
            task.add_done_callback(_shadow_tasks.discard)
        return old_result


    async def _shadow_eval(user_id: str, old_result: list[Rec]) -> None:
        t0 = time.perf_counter()
        try:
            new_result = await new_recommender(user_id)
            metrics.histogram("rec_v2.latency_ms", (time.perf_counter() - t0) * 1000)
            metrics.diff("rec_v2.divergence", old_result, new_result)
        except Exception as err:
            # A failing shadow path must never affect the user-facing response.
            metrics.counter("rec_v2.errors", type=type(err).__name__)

After a week of real traffic you know the new system’s p99, error rate, and divergence from the old one. Ramping to live traffic is then a boring decision instead of a gamble.
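What “divergence” means depends on the system being compared. For a recommender, one reasonable choice is set overlap of the top results. The sketch below is an assumption about how such a metric could be computed (one minus the Jaccard overlap of the top k item IDs), not a description of what the metrics.diff call in the examples above does.

```python
def divergence(old: list[str], new: list[str], k: int = 10) -> float:
    """Return 1 - Jaccard overlap of the top-k items: 0.0 is identical, 1.0 is disjoint."""
    a, b = set(old[:k]), set(new[:k])
    if not a and not b:
        # Two empty result lists agree with each other.
        return 0.0
    return 1 - len(a & b) / len(a | b)


print(divergence(["a", "b", "c"], ["b", "c", "d"]))  # 0.5: two shared items out of four distinct
```

This version ignores ordering within the top k. If rank matters for your product, a rank-aware measure is a better fit, at the cost of being harder to explain on a dashboard.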

Client-Side vs Server-Side Evaluation

Where the flag decision runs changes what you can do with it.

Server-side hides the flag’s existence from the client. Good for backend-only changes, good for A/B tests where you don’t want users inspecting network traffic to learn about unreleased features, good for privacy (attributes never leave your infrastructure). Cost: every flag check is an in-process evaluation on your servers.

Client-side lets the browser decide. Good for UI-only changes where server involvement is overkill. Bad for latency (the first evaluation can flicker — old UI, then new UI), bad for privacy (the SDK sends attributes to the vendor), and easy to bypass (DevTools can flip any flag). For authorization, client-side flags are theater — enforce on the server or don’t bother.

Flicker mitigations. Server-render the initial flag state and hydrate with it. If you must evaluate in-browser, block render with a short timeout and a sensible fallback, not a spinner. Users notice 300ms of layout thrash more than 300ms of nothing.
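On the server-rendering side, the idea is to evaluate the page's flags once, under a tight time budget, and embed the result in the HTML so the client hydrates with it. A minimal Python sketch follows. The evaluate callable stands in for whatever your flag client exposes, and the 50 ms budget is an arbitrary assumption.

```python
import asyncio
import json
from typing import Awaitable, Callable

Evaluate = Callable[[str], Awaitable[bool]]


async def evaluate_or_fallback(evaluate: Evaluate, key: str, fallback: bool, timeout_s: float) -> bool:
    try:
        return await asyncio.wait_for(evaluate(key), timeout=timeout_s)
    except Exception:
        # Timeouts and provider errors both degrade to the known-safe default.
        return fallback


async def bootstrap_flags(evaluate: Evaluate, defaults: dict[str, bool], timeout_s: float = 0.05) -> str:
    """Evaluate all flags concurrently and return JSON to embed in the rendered page."""
    keys = sorted(defaults)
    values = await asyncio.gather(
        *(evaluate_or_fallback(evaluate, key, defaults[key], timeout_s) for key in keys)
    )
    return json.dumps(dict(zip(keys, values)))
```

The returned string can be written into the initial HTML (for example as a JSON script tag) so the first client render uses the same values the server used and nothing re-lays-out after hydration.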

Emergency Drills — Is Your Kill Switch Actually Useful at 3am?

A kill switch that nobody has ever used is a kill switch that doesn’t work. You find out the wrong way: the recommender service is melting down, the on-call engineer pulls up the flag dashboard for the first time at 3:12am, the SSO session expired, the flag key isn’t what they expected, and the disable button requires a second reviewer who is asleep.

Drill the kill switch the same way you drill database failover. Quarterly, in a scheduled window:

- On-call engineer gets paged with “disable recommendations_kill.”

- They do it without help. Time how long it takes.

- Verify traffic drops on the dependent service.

- Re-enable, post the timing to the team channel.

If the median time-to-kill is more than 90 seconds, you have a usability bug in your incident response. Common causes: flag dashboard behind an SSO provider that rotates tokens, kill switches named ambiguously (enable_recs vs disable_recs — which is “on” in an outage?), required approval workflows that have no bypass for high-severity incidents.

Name kill switches affirmatively: kill_recommendations is unambiguous. “Flip to true to kill” should be written in the flag description in plain English. At 3am nobody reads source code.
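Tracking drill results takes only a few lines. A minimal Python sketch, assuming you record each drill's time-to-kill in seconds and use the 90-second target from above; the report shape is hypothetical:

```python
from statistics import median

TARGET_SECONDS = 90


def drill_report(timings_s: list[float], target_s: float = TARGET_SECONDS) -> dict:
    """Summarize kill-switch drill timings against the target median."""
    if not timings_s:
        raise ValueError("no drill timings recorded")
    med = median(timings_s)
    return {"median_s": med, "within_target": med <= target_s, "worst_s": max(timings_s)}


print(drill_report([45, 80, 200]))
# {'median_s': 80, 'within_target': True, 'worst_s': 200}
```

Reporting the worst run alongside the median is deliberate: a healthy median can hide the one drill where the engineer was locked out of the dashboard.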

Flag Debt — Metrics, Audits, Enforcement

Flag debt is the invisible cost that eats flag programs alive. Measure it or it will grow without bound.

Metrics to track.

- Count of active flags per category. Release should trend toward zero at steady state.

- Age distribution. Release flags older than 60 days are a smell. Older than 180 days, almost always dead.

- Stale flags — not evaluated in N days. Your SDK telemetry tells you this.

- Flags with no owner — should always be zero.

- Flags at 100% rollout for >14 days. These are shipping code; the flag is ceremonial.
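These metrics fall out of a single pass over flag metadata. The Python sketch below assumes a hypothetical Flag record exported from your platform, a 30-day staleness window for N, and that a flag with no recorded evaluation counts as stale; adjust all three to your setup.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Flag:
    """Hypothetical flag metadata; field names are illustrative."""
    key: str
    category: str  # "release", "kill", "experiment", or "permission"
    created: date
    owner: Optional[str] = None
    last_evaluated: Optional[date] = None
    full_rollout_since: Optional[date] = None


def debt_report(flags: list[Flag], today: date, stale_after_days: int = 30) -> dict:
    def days_since(d: date) -> int:
        return (today - d).days

    return {
        "active_by_category": dict(Counter(f.category for f in flags)),
        "release_older_than_60d": sorted(
            f.key for f in flags if f.category == "release" and days_since(f.created) > 60
        ),
        "stale": sorted(
            f.key for f in flags
            if f.last_evaluated is None or days_since(f.last_evaluated) > stale_after_days
        ),
        "ownerless": sorted(f.key for f in flags if not f.owner),
        "ceremonial": sorted(
            f.key for f in flags
            if f.full_rollout_since and days_since(f.full_rollout_since) > 14
        ),
    }
```

Each list holds flag keys rather than counts, so the same output can feed both a dashboard and the cleanup pings.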

Audits. Monthly, a platform engineer (or a scheduled job) reviews the top N offenders and pings owners. Keep the meeting short — five minutes, names and numbers, no slide deck.

Enforcement. Make flag creation cheap and flag retirement automatic. Some teams gate new-flag creation on closing an old one when they’re over a quota. Others run a linter that fails CI when a file references a flag the platform says has been at 100% for 14 days. Both work; pick one and stick with it.
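The linter variant can be very small. The Python sketch below assumes flags are read through calls named is_enabled or isEnabled with a string-literal key, and that the set of retired keys comes from your platform's API; both are assumptions, and a real linter would likely parse the code instead of using a regex.

```python
import re

# Matches is_enabled("key" ...) and isEnabled('key' ...) with a literal key.
FLAG_CALL = re.compile(r"""(?:is_enabled|isEnabled)\(\s*["']([A-Za-z0-9_]+)["']""")


def retired_flag_references(sources: dict[str, str], retired: set[str]) -> list[tuple[str, int, str]]:
    """Return (path, line number, flag key) for every reference to a retired flag."""
    hits = []
    for path, text in sorted(sources.items()):
        for lineno, line in enumerate(text.splitlines(), start=1):
            for key in FLAG_CALL.findall(line):
                if key in retired:
                    hits.append((path, lineno, key))
    return hits
```

In CI, a wrapper would read the changed files, call this function, print the hits, and exit non-zero if the list is not empty.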

The point isn’t zero flags. It’s that every live flag has a reason you can articulate in one sentence.

Closing Checklist

Before you declare a progressive delivery program “done”:

- Every flag has a category, owner, TTL, and a one-sentence description.

- The flag evaluator is behind an interface — you could swap vendors in a week.

- Unit tests use a fake client that errors on undeclared flags.

- Rollouts ramp through 1 → 5 → 25 → 100 with automated SLO checks.

- At least one kill switch has been exercised in a drill, not just in theory.

- Flag-debt metrics are on a dashboard someone actually looks at.

- A monthly cleanup job is scheduled and ticketed, not reliant on willpower.

- Client-side flags are never trusted for authorization.

Progressive delivery doesn’t make shipping safer by itself. It gives you the levers; the safety comes from the discipline around them. Get the naming, ownership, and cleanup right and flags become one of the most useful tools in the production toolbox. Get them wrong and you ship a thousand branches of code nobody understands.

Further Reading

- Accelerate — Forsgren, Humble, Kim (2018). The research behind why decoupling deploy from release correlates with high-performing teams.

- Feature Flag Best Practices — Pete Hodgson’s O’Reilly report. The short version of everything above.

- Release It! — Michael Nygard (2018). Kill switches and stability patterns in the broader context of production systems.

Flags are cheap to add and expensive to own. Budget accordingly.