The Node.js Assumption

For years, I operated under a comfortable assumption: JavaScript is too slow for real performance work. You use it for APIs and web servers, accept that you’ll need caching layers and CDNs, and move on. Python, Ruby, all the same story. Real performance meant C++, sometimes Go if you were feeling pragmatic.

Then I spent six months building a message broker in Rust, and everything changed.

Learning Rust’s Memory Model

The friction of learning Rust isn’t the borrow checker—it’s the mental reset. In JavaScript, memory is someone else’s problem (the GC). In Rust, it’s yours, completely. That forces you to think about:

- Where does this data live?

- How long does it need to live?

- Who is responsible for freeing it?

These questions sound academic until you’re responsible for handling 50,000 requests per second with sub-millisecond latencies. Suddenly they become survival questions.
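A minimal sketch of how those three questions show up in actual code. The `Message` type and the `checksum` and `consume` functions are hypothetical, invented for illustration rather than taken from the broker.

```rust
/// Hypothetical message type, used only for illustration.
struct Message {
    payload: Vec<u8>, // Where does it live? The bytes sit on the heap, owned by this struct.
}

/// Borrows the message: the caller keeps ownership, nothing is copied or freed here.
fn checksum(msg: &Message) -> u32 {
    msg.payload.iter().map(|&b| b as u32).sum()
}

/// Takes ownership: the message is freed when this function returns.
fn consume(msg: Message) -> usize {
    msg.payload.len()
} // `msg` is dropped here and its heap buffer is released.

fn main() {
    let msg = Message { payload: vec![1, 2, 3] };
    let sum = checksum(&msg); // How long does it live? At least as long as this borrow.
    let len = consume(msg); // Who frees it? `consume` now owns it and drops it.
    println!("sum = {sum}, len = {len}");
    // Using `msg` past this point would be a compile error: it has been moved.
}
```

The compiler answers all three questions at build time, which is why none of them cost anything at runtime.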

Zero-Copy Architecture

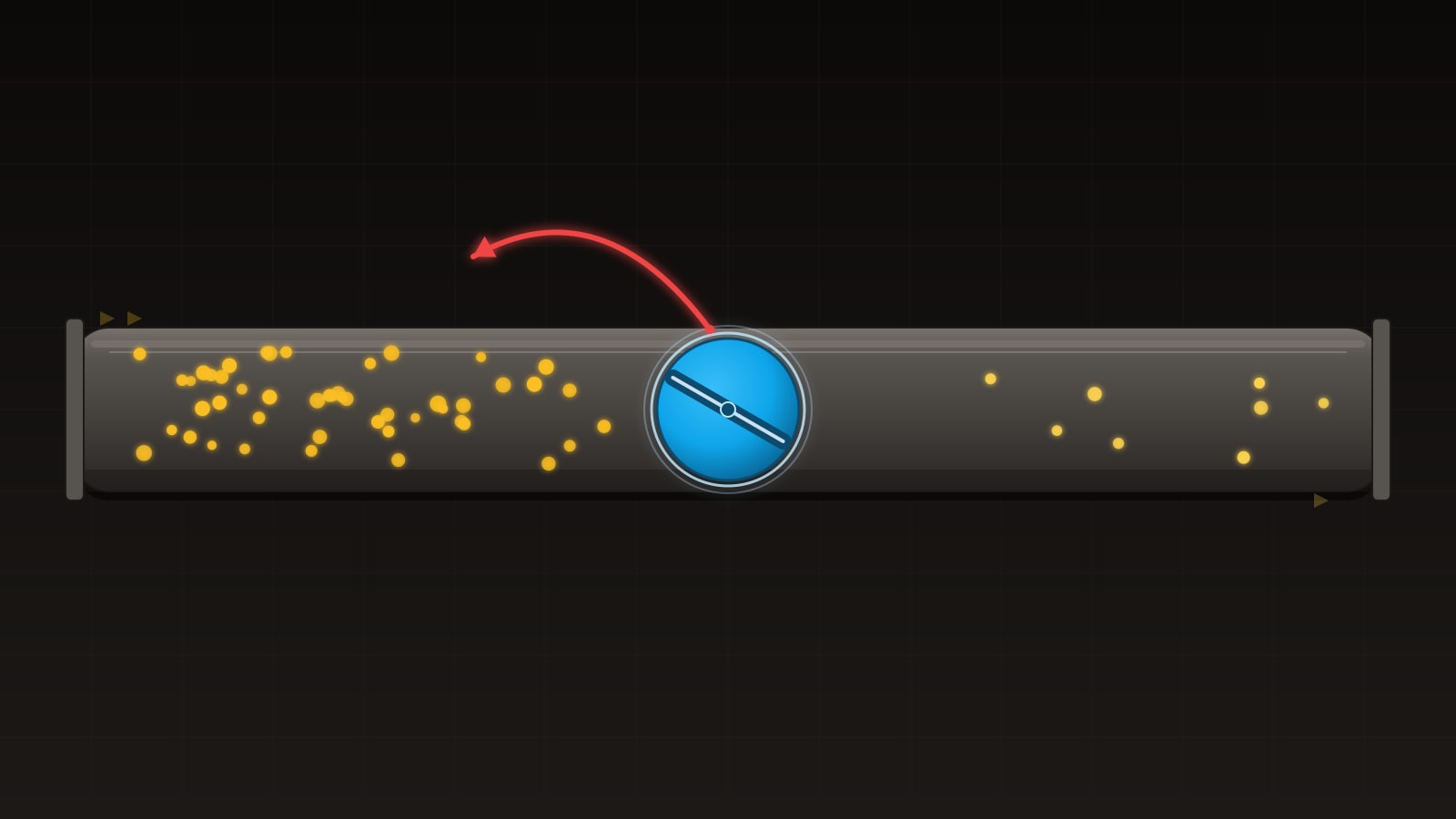

The biggest insight from Rust: most of the allocation overhead in backend services comes from copying data.

In Node.js, when you read a message from a socket, parse JSON, extract a field, and return it, you’ve probably created 5-10 intermediate allocations. The GC will clean them up eventually, but under high throughput “eventually” can mean a 50-200 millisecond pause, and it arrives precisely when the service is busiest.

In Rust, you write once. The data flows through your application as references. Parsing doesn’t copy—it annotates. By the time a message reaches your handler, there have been zero allocations (except for the initial socket buffer, which you reuse).
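Here is a rough sketch of what “parsing annotates” can look like. The `topic:payload` wire format, the `Frame` type, and `parse_frame` are assumptions made up for this example, not the broker’s real protocol.

```rust
/// A parsed view over a received buffer. Both fields borrow from the
/// original bytes, so building a `Frame` allocates nothing.
#[derive(Debug, PartialEq)]
struct Frame<'a> {
    topic: &'a str,
    payload: &'a [u8],
}

/// Parses a hypothetical `topic:payload` wire format without copying.
/// Returns `None` if the separator is missing or the topic is not UTF-8.
fn parse_frame(buf: &[u8]) -> Option<Frame<'_>> {
    let sep = buf.iter().position(|&b| b == b':')?;
    let topic = std::str::from_utf8(&buf[..sep]).ok()?;
    Some(Frame { topic, payload: &buf[sep + 1..] })
}

fn main() {
    // Stands in for the reused socket buffer.
    let buf = b"orders:{\"id\":42}".to_vec();
    let frame = parse_frame(&buf).expect("well-formed frame");
    // The payload slice points into `buf` itself rather than at a copy.
    assert!(std::ptr::eq(frame.payload.as_ptr(), buf[7..].as_ptr()));
    println!("{} -> {} bytes", frame.topic, frame.payload.len());
}
```

The lifetime `'a` is what makes this safe: the compiler refuses to let a `Frame` outlive the buffer it points into.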

Benchmark time: under a load that would push an equivalent Node.js service past 90% CPU usage, the Rust broker stayed under 15%. Same logic. Different runtime.

Threading vs. Async Runtime

Here’s where I changed my thinking most drastically. I previously believed that async/await was inherently faster than threads. Threads pay for context switches, spawn overhead, and so on. It’s all true.

But I was wrong about the conclusion.

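Rust’s async runtime (Tokio) is incredible, but async by itself doesn’t create parallelism. A single task, or a single-threaded runtime, still runs on one CPU core and only switches between tasks cheaply. Tokio’s default multi-threaded runtime does spread tasks across worker threads, but a CPU-heavy task ties up its worker and delays the I/O tasks that would have run there. True parallelism for CPU-bound work requires actual threads that are allowed to stay busy.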

For the message broker, I used:

- Tokio async for I/O: accepting connections, reading from the network

- Native threads for CPU-bound work: parsing, validation, business logic

This is borderline heresy in the Node.js world, where the entire application is a single JavaScript context. But it works. A 16-core machine can actually do 16 things in parallel, not 16 context-switch illusions.
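Setting the network side aside for a moment, the CPU-bound half can be sketched with nothing but the standard library. The `validate` function below is a hypothetical stand-in for parsing or validation work, and splitting the input into one chunk per worker is an assumption of this example, not how the broker schedules its work.

```rust
use std::thread;

/// Stand-in for CPU-bound work such as parsing or validation (hypothetical).
fn validate(chunk: &[u64]) -> u64 {
    chunk.iter().map(|x| x.wrapping_mul(31) % 7).sum()
}

/// Splits `items` into roughly one chunk per worker and processes the chunks
/// on separate OS threads, so they can run on different cores at once.
fn parallel_validate(items: &[u64], workers: usize) -> u64 {
    let workers = workers.max(1);
    // Round up so every item lands in a chunk; `chunks(0)` would panic.
    let chunk_len = ((items.len() + workers - 1) / workers).max(1);
    thread::scope(|s| {
        // Collect first so all threads are spawned before any is joined.
        let handles: Vec<_> = items
            .chunks(chunk_len)
            .map(|chunk| s.spawn(move || validate(chunk)))
            .collect();
        handles
            .into_iter()
            .map(|h| h.join().expect("worker panicked"))
            .sum::<u64>()
    })
}

fn main() {
    let items: Vec<u64> = (0..1_000_000).collect();
    let workers = thread::available_parallelism().map(|n| n.get()).unwrap_or(1);
    let total = parallel_validate(&items, workers);
    // The parallel result matches the single-threaded one.
    assert_eq!(total, validate(&items));
    println!("{workers} workers, total = {total}");
}
```

Scoped threads borrow `items` directly, so the fan-out itself copies no data.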

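    // Tokio for I/O, a blocking thread pool for CPU work
    use tokio::{io::AsyncReadExt, net::TcpListener};

    let listener = TcpListener::bind("127.0.0.1:8080").await?;
    loop {
        let (mut socket, _) = listener.accept().await?;
        tokio::spawn(async move {
            let mut buf = vec![0u8; 1024];
            loop {
                let n = match socket.read(&mut buf).await {
                    Ok(0) | Err(_) => return, // connection closed or read failed
                    Ok(n) => n,
                };
                // spawn_blocking needs a 'static closure, so hand it an owned copy
                let msg = buf[..n].to_vec();
                // Hand off to the blocking thread pool for processing
                let result = tokio::task::spawn_blocking(move || process_message(&msg)).await;
                // Return result
            }
        });
    }

The Garbage Collection Revelation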

The real performance killer isn’t allocation—it’s unpredictability. A Node.js service might run at 50ms latency for 10 seconds, then spike to 2 seconds when GC runs. That’s fine for a web API. It’s unacceptable for a message broker serving 50k requests/sec.

Rust eliminates this. Zero GC. You know exactly when allocations happen, and you can structure code to allocate during cold phases (startup, connection initialization) and stay allocation-free during hot phases (the request loop).
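The cold-phase and hot-phase split can be sketched as follows. The `Connection` type, its `scratch` buffer, and the `ack:` reply format are hypothetical names chosen for this example. The sketch assumes every reply fits within the capacity reserved up front.

```rust
/// Per-connection scratch space, allocated once during connection setup
/// (the cold phase) and reused for every message afterwards.
struct Connection {
    scratch: Vec<u8>,
}

impl Connection {
    /// Cold phase: the only place this sketch allocates.
    fn new(max_message_len: usize) -> Self {
        Connection { scratch: Vec::with_capacity(max_message_len) }
    }

    /// Hot phase: builds a reply in the existing buffer. `clear` resets the
    /// length but keeps the capacity, so nothing is allocated as long as the
    /// reply fits within `max_message_len`.
    fn handle(&mut self, msg: &[u8]) -> &[u8] {
        self.scratch.clear();
        self.scratch.extend_from_slice(b"ack:");
        self.scratch.extend_from_slice(msg);
        &self.scratch
    }
}

fn main() {
    let mut conn = Connection::new(1024);
    let ptr_before = conn.scratch.as_ptr();
    for i in 0..10_000u32 {
        let reply = conn.handle(&i.to_le_bytes());
        assert_eq!(reply.len(), 8); // 4 bytes of "ack:" plus 4 bytes of payload
    }
    // Same heap block throughout: the hot loop never reallocated.
    assert_eq!(conn.scratch.as_ptr(), ptr_before);
}
```

A reply larger than the reserved capacity would still work, but it would trigger a reallocation in the hot path, so the sizing decision belongs in the cold phase.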

I’ve returned to Node.js projects and immediately profiled them with this new perspective. The ones with wildly unpredictable latency profiles almost always have one thing in common: they’re generating garbage at peak throughput.

When Rust is Overkill

This isn’t a “rewrite everything in Rust” essay. For most services, Node.js remains the right choice, especially when:

- Startup time matters more than steady-state performance

- You’re I/O-bound, not CPU-bound

- Time-to-market beats optimal runtime characteristics

- Your team knows Node.js already

A stateless HTTP API? Use Node.js. A message broker where every microsecond of latency is money? Use Rust.

The Mindset Shift

The real takeaway isn’t “Rust is fast.” It’s that understanding memory allocation forced me to rethink performance entirely. I now ask different questions in Node.js code:

- How many allocations does this hot path create?

- Can I reuse buffers instead of creating new ones?

- Is my GC pause time predictable?

These are questions every backend engineer should be asking, regardless of language. Rust forced the conversation. That’s why it changed how I think about performance.