Retrieval-Augmented Generation looks trivial in a notebook and brutal in production. The gap between the two is filled with messy PDFs, ambiguous queries, silent hallucinations, and cost spikes nobody forecast. This post is the field guide I wish I had the first time a RAG demo met a real corpus.

TL;DR

- Retrieval quality is the ceiling of RAG quality — the model can only answer from context it actually receives.

- Split the system into an offline indexing pipeline and an online query pipeline; do not collapse them.

- Spend disproportionate time on ingestion: HTML chrome, PDF OCR, tables, and near-duplicates all sabotage retrieval.

- Use document-structure-aware chunking where structure exists; fall back to recursive splitting with overlap.

- Default to hybrid search (BM25 + vector, fused with RRF), then add a cross-encoder reranker when latency allows.

- Force citations in the prompt and reject answers whose claims have no source — this kills a whole class of hallucinations.

- Build a small gold eval set early, measure context recall / answer relevance / faithfulness, and watch production traffic.

Why Most RAG Demos Don’t Survive Real Data

Every LLM demo follows the same arc. You load a folder of clean markdown, split it every 1,000 characters, embed with text-embedding-3-small, stuff the top-5 chunks into a prompt, and the model answers perfectly on the three questions you rehearsed.

Then you ship it. The real corpus shows up: scanned PDFs with rotated pages, multi-column layouts, tables that matter, code blocks that mustn’t be broken, HTML dumps with navigation chrome, duplicate documents from three different CMS exports, and questions like “what did we decide about vendor X in Q3?” that no cosine similarity will ever find.

This post is about what sits between the demo and a RAG system that survives production. I assume you’ve already built the demo. If you haven’t, build it first — the failure modes below only make sense once you’ve felt the pull of the naive happy path.

The theme throughout: retrieval quality is the ceiling of RAG quality. The smartest model in the world cannot answer from context it never received. Every section below is ultimately about feeding the model better context.

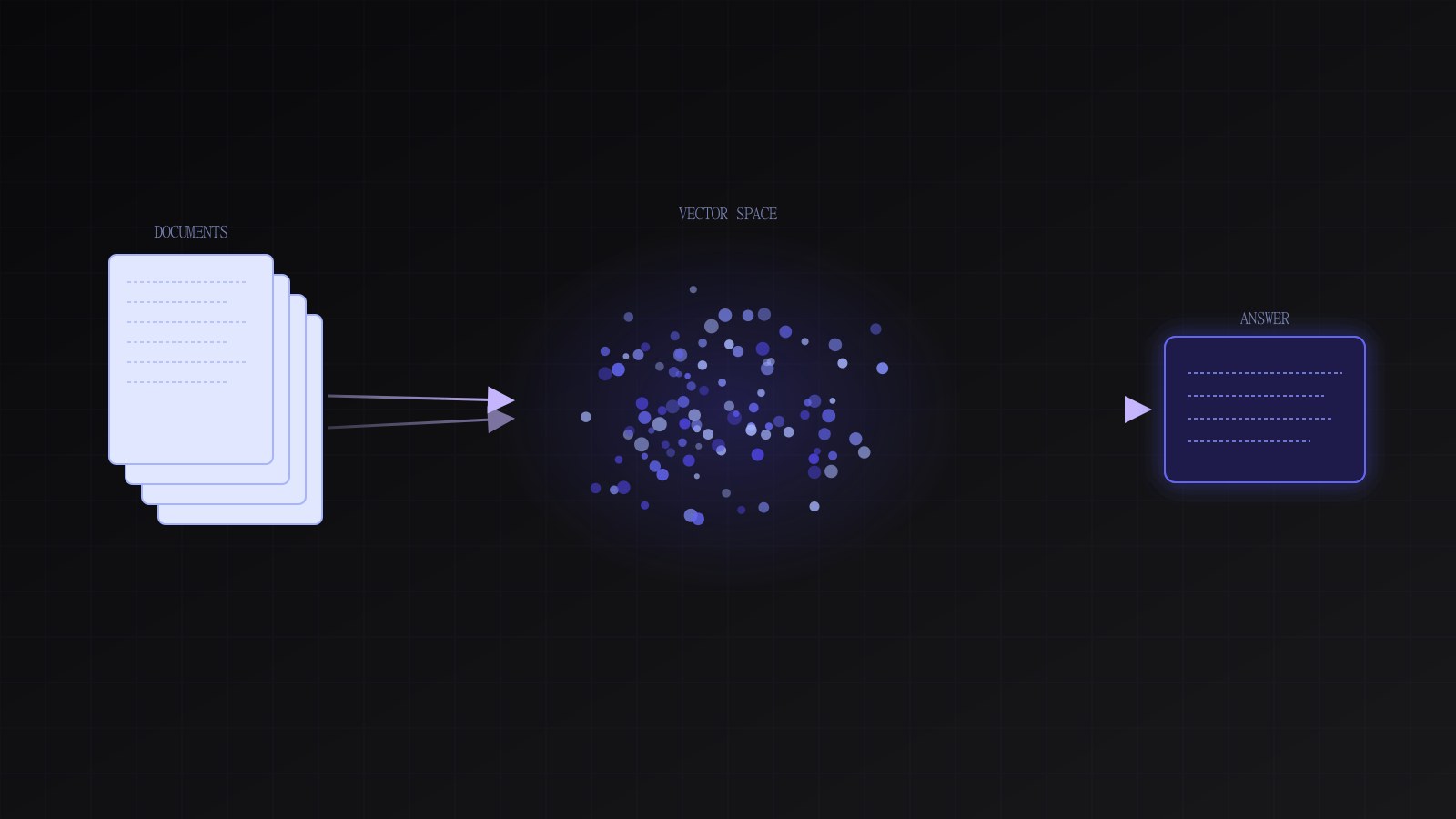

The Pipeline at a Glance

A production RAG system is not one pipeline. It’s two: an offline indexing pipeline that processes source documents, and an online query pipeline that serves requests. They share an index, and nothing else.

```mermaid
flowchart LR
    subgraph Offline[Indexing pipeline]
        A[Source docs] --> B[Parse / OCR]
        B --> C[Chunk]
        C --> D[Embed]
        D --> E[(Vector + BM25 index)]
    end
    subgraph Online[Query pipeline]
        Q[User query] --> R[Retrieve<br/>BM25 + vector]
        R --> F[Fuse RRF]
        F --> RR[Rerank<br/>cross-encoder]
        RR --> P[Prompt with citations]
        P --> L[LLM]
        L --> ANS[Answer + sources]
        ANS --> EV[Eval / telemetry]
    end
    E --> R
```

Most teams collapse the two into one script and regret it. Keep them separate. The indexing pipeline is a batch job that can take hours; the query pipeline must answer in hundreds of milliseconds.

Ingestion: The Unglamorous 40%

You will spend more time on parsing than on prompts, embeddings, and vector DBs combined. This is not a failure of your architecture; it’s the nature of real documents.

HTML

The first mistake is using BeautifulSoup.get_text() and shipping. Real HTML contains navigation, cookie banners, footers, “related posts,” ad slots, and <script> tags that survive naive extraction. They pollute your index with near-duplicate boilerplate that crushes retrieval precision — “privacy policy” will outrank your actual content.

Use a reader-mode extractor (trafilatura, readability-lxml, or Mozilla’s readability) before anything else. These isolate the main content block, strip chrome, and preserve structure.

```python
import trafilatura

def extract_html(html: str) -> str | None:
    # extract() expects the HTML content itself, not a URL. If you only
    # have an address, download it first with trafilatura.fetch_url(url).
    return trafilatura.extract(
        html,
        include_tables=True,
        include_links=False,
        include_comments=False,
        favor_precision=True,
    )
```

PDFs

PDFs are the hardest format in the corpus. They come in three flavors, and you must detect which one you have:

- Born-digital text PDFs: the text layer is intact. pypdf or pdfplumber works, but column order is often wrong.

- Scanned PDFs: the “text” is pixels. You need OCR (tesseract, paddleocr, or a cloud vision API).

- Hybrid: a text layer exists but was produced by bad OCR at creation time, so you get “rn” instead of “m” and similar rot.

A useful heuristic: if the text layer averages fewer than about 100 characters per page (len(extracted_text) / num_pages < 100), assume it’s scanned or corrupt and fall back to OCR. For layout-aware extraction (tables, multi-column, figures), docling, unstructured, or marker give dramatically better results than raw PDF libraries, at the cost of much higher compute per page.

```python
from pathlib import Path

import pypdf
from unstructured.partition.pdf import partition_pdf

def parse_pdf(path: Path) -> list[dict]:
    # Fast path: try text extraction first
    reader = pypdf.PdfReader(path)
    raw = "\n".join(p.extract_text() or "" for p in reader.pages)
    if len(raw) / max(len(reader.pages), 1) < 100:
        # Likely scanned: fall back to layout-aware parser with OCR
        elements = partition_pdf(
            filename=str(path),
            strategy="hi_res",
            infer_table_structure=True,
            languages=["eng"],
        )
        return [{"type": e.category, "text": e.text, "page": e.metadata.page_number}
                for e in elements if e.text]
    return [{"type": "NarrativeText", "text": raw, "page": None}]
```

Tables

Tables are where most RAG systems silently lose accuracy. A 2D structure serialized as whitespace-separated text is nearly useless to an embedding model. Two options:

- Serialize as markdown tables, which LLMs read natively. Good for small tables.

- Serialize each row as a sentence (“In 2024, revenue was $12M and headcount was 82.”), then embed each row separately. Better for large tables you need to retrieve on.
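The row-as-sentence option can be sketched as below. This is a minimal illustration, assuming the table has already been parsed into a header list and rows of strings. The function names and the "X is Y" phrasing are hypothetical choices rather than any library's API, and the 2023 row is made-up sample data.

```python
def row_to_sentence(header: list[str], row: list[str], key_column: int = 0) -> str:
    """Turn one table row into a standalone sentence for embedding."""
    key = f"{header[key_column]} {row[key_column]}"
    facts = [
        f"{name} is {value}"
        for i, (name, value) in enumerate(zip(header, row))
        if i != key_column and value.strip()  # skip the key column and empty cells
    ]
    return f"For {key}: " + ", ".join(facts) + "."


def table_to_sentences(header: list[str], rows: list[list[str]]) -> list[str]:
    """One sentence per row; each sentence is embedded as its own chunk."""
    return [row_to_sentence(header, r) for r in rows]


# Illustrative data only.
header = ["year", "revenue", "headcount"]
rows = [["2023", "$9M", "61"], ["2024", "$12M", "82"]]
sentences = table_to_sentences(header, rows)
```

Each sentence repeats the column names, so a row remains interpretable when it is retrieved without the rest of the table.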

Code blocks

If your corpus contains code — docs, runbooks, internal wikis — preserve fence markers and language tags through every transformation. Code split across chunks is worse than useless; the model will hallucinate the missing half.

Duplicates

Near-duplicate detection matters more than people expect. Multi-export CMS migrations routinely produce the same article three times with whitespace differences. An exact hash of whitespace- and case-normalized content removes the identical copies for almost nothing; MinHash (via datasketch) on the normalized text then catches most of the near-duplicates that remain.
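MinHash is an approximation of Jaccard similarity over shingle sets, so the idea can be shown with exact Jaccard on a small corpus. The sketch below uses only the standard library; the helper names, the 5-word shingles, and the 0.9 threshold are illustrative assumptions. At real scale you would swap the pairwise comparison for MinHash with LSH.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so cosmetic differences vanish."""
    return re.sub(r"\s+", " ", text).strip().lower()


def exact_key(text: str) -> str:
    """Hash of normalized text: catches copies differing only in case/whitespace."""
    return hashlib.sha256(normalize(text).encode()).hexdigest()


def shingles(text: str, n: int = 5) -> set[str]:
    """Set of overlapping n-word windows from the normalized text."""
    words = normalize(text).split()
    if len(words) <= n:
        return {" ".join(words)}
    return {" ".join(words[i : i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard similarity, the quantity MinHash estimates."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def dedupe(docs: dict[str, str], threshold: float = 0.9) -> list[str]:
    """Return the doc ids to keep. Pairwise comparison, so O(n^2)."""
    kept: list[str] = []
    seen_keys: set[str] = set()
    kept_shingles: list[set[str]] = []
    for doc_id, text in docs.items():
        key = exact_key(text)
        if key in seen_keys:
            continue  # identical after normalization
        sh = shingles(text)
        if any(jaccard(sh, other) >= threshold for other in kept_shingles):
            continue  # near-duplicate of a document we already kept
        seen_keys.add(key)
        kept.append(doc_id)
        kept_shingles.append(sh)
    return kept
```

The first document seen wins, so feed documents in the order of the source you trust most.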

Chunking: No Free Lunch

Chunking is a joint optimization problem over embedding quality, retrieval recall, and prompt budget. Every strategy trades one for another.

Fixed-size token chunks

Easiest, and usually wrong. Splits sentences, tables, and code.

Recursive character splitting

The de facto default. Split on paragraph breaks first, then sentence breaks, then words — only fall through to hard character splits if nothing else works. Use an overlap of 10–20% to preserve cross-boundary context.

```python
def recursive_split(
    text: str,
    chunk_size: int = 800,
    overlap: int = 120,
    separators: tuple[str, ...] = ("\n\n", "\n", ". ", " ", ""),
) -> list[str]:
    def split(text: str, seps: tuple[str, ...]) -> list[str]:
        if len(text) <= chunk_size or not seps:
            return [text]
        sep, rest = seps[0], seps[1:]
        parts = text.split(sep) if sep else list(text)
        chunks, buf = [], ""
        for p in parts:
            candidate = buf + (sep if buf else "") + p
            if len(candidate) <= chunk_size:
                buf = candidate
            else:
                if buf:
                    chunks.append(buf)
                buf = p if len(p) <= chunk_size else ""
                if len(p) > chunk_size:
                    chunks.extend(split(p, rest))
        if buf:
            chunks.append(buf)
        return chunks

    raw = split(text, separators)

    # Apply overlap by prepending the tail of the previous chunk
    out: list[str] = []
    prev_tail = ""
    for c in raw:
        out.append((prev_tail + c)[-(chunk_size + overlap):])
        prev_tail = c[-overlap:] if overlap else ""
    return out
```

Semantic chunking

Embed each sentence, measure cosine distance between neighbors, split at the high-distance boundaries. Produces chunks that respect topic shifts instead of paragraph breaks. Worth it for long-form content (papers, book chapters) where paragraphs are artificial.

Cost: you embed the whole corpus twice (once for chunking, once for indexing).
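A minimal sketch of the boundary rule follows. It assumes sentences are already split and that you pass in your own `embed` function (hypothetical here); the fixed 0.3 distance threshold is also an assumption, and many implementations use a percentile of the observed distances instead.

```python
import math
from typing import Callable, Sequence


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """1 - cosine similarity; returns 1.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm if norm else 1.0


def semantic_chunks(
    sentences: list[str],
    embed: Callable[[list[str]], list[list[float]]],  # hypothetical embedding hook
    threshold: float = 0.3,
) -> list[str]:
    """Start a new chunk wherever neighboring sentences are far apart."""
    if not sentences:
        return []
    vectors = embed(sentences)
    chunks: list[list[str]] = [[sentences[0]]]
    for prev, cur, sentence in zip(vectors, vectors[1:], sentences[1:]):
        if cosine_distance(prev, cur) > threshold:
            chunks.append([sentence])  # topic shift: open a new chunk
        else:
            chunks[-1].append(sentence)
    return [" ".join(c) for c in chunks]
```

In practice you would also cap chunk length, since a long run of similar sentences otherwise produces one oversized chunk.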

Document-structure-aware chunking

For anything with headings — docs, runbooks, legal — split by heading hierarchy and keep the heading trail as metadata. Every chunk knows which section it belongs to, which means you can filter (“only chunks from the Security section”) and you can show users real breadcrumbs.

```python
from dataclasses import dataclass, field

FENCE = "`" * 3  # Markdown code-fence marker

@dataclass
class Chunk:
    text: str
    doc_id: str
    heading_trail: list[str] = field(default_factory=list)
    page: int | None = None
    token_count: int = 0

def chunk_by_headings(doc_id: str, markdown: str, max_tokens: int = 400) -> list[Chunk]:
    chunks: list[Chunk] = []
    trail: list[str] = []
    buf: list[str] = []
    in_fence = False  # inside a code fence, "#" lines are comments, not headings

    def flush():
        if not buf:
            return
        text = "\n".join(buf).strip()
        if text:
            chunks.append(Chunk(text=text, doc_id=doc_id, heading_trail=list(trail)))
        buf.clear()

    for line in markdown.splitlines():
        if line.lstrip().startswith(FENCE):
            in_fence = not in_fence
        if line.startswith("#") and not in_fence:
            flush()
            level = len(line) - len(line.lstrip("#"))
            title = line.lstrip("# ").strip()
            trail = trail[: level - 1] + [title]
        else:
            buf.append(line)
            # Never flush mid-fence, so a code block stays in one chunk.
            if not in_fence and sum(len(x) for x in buf) > max_tokens * 4:  # rough char→token
                flush()
    flush()
    return chunks
```

Rule of thumb: start with document-structure-aware chunking where structure exists, recursive splitting as a fallback, and semantic chunking only when you’ve measured that the other two aren’t enough.

Embeddings: Pick Two of Three

You are trading off across three axes: quality, dimensionality (storage + retrieval cost), and price/latency. There is no model that wins on all three, and rankings shift every quarter, so treat this section as a framework rather than a verdict.

The current realistic shortlist:

- OpenAI text-embedding-3-large / -small: strong general English, simple API, and the -small variant is very cheap. Supports dimension truncation (Matryoshka) so you can trade recall for storage without re-embedding.

- Cohere embed-v3: competitive quality, multilingual variants that genuinely work on non-English corpora.

- bge-* (BAAI general embedding): open-weight, self-hostable, strong on English benchmarks. Good when data cannot leave your network.

- gte-* (Alibaba): similar niche to bge, occasionally stronger on certain domains.

Whichever you pick, evaluate on your own data: published benchmark rankings rarely transfer cleanly to a specific corpus. Build a small gold set (see the Evaluation section) and run each candidate against it.

Dimensions vs accuracy

Higher-dimensional embeddings carry more signal and usually retrieve more accurately, but they cost more to store, index, and compare. For most apps, 768–1024 dimensions is the sweet spot. Models that support Matryoshka representations (text-embedding-3-*, some bge variants) let you store the full vector and truncate later without re-embedding, as long as stored and query vectors are cut to the same length and re-normalized.
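Truncation itself is a one-liner plus a re-normalization step. The sketch below assumes the vector came from a Matryoshka-trained model; cutting embeddings from a model not trained this way typically loses far more accuracy. `truncate_embedding` is a hypothetical helper name.

```python
import math


def truncate_embedding(vec: list[float], dims: int) -> list[float]:
    """Keep the first `dims` components and re-normalize to unit length."""
    if not 0 < dims <= len(vec):
        raise ValueError(f"dims must be in 1..{len(vec)}, got {dims}")
    head = vec[:dims]
    norm = math.sqrt(sum(x * x for x in head))
    # Re-normalizing keeps cosine and dot-product scores comparable.
    return [x / norm for x in head] if norm else head
```

Apply the same `dims` to both the indexed vectors and the query vector; comparing a truncated query against full-length stored vectors will fail or give meaningless scores.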

When to fine-tune

Almost never, and later than you think. In order of cost-effectiveness:

- Better chunking and metadata — fixes most “retrieval is bad” problems.

- Hybrid search + reranker — fixes most of what’s left.

- Instruction-tuned prompts at embedding time — some models (bge, e5) accept a task prefix that boosts retrieval. Free win if your model supports it.

- Fine-tune the embedding model — only if you have thousands of domain query/document pairs and have exhausted the above.

Batching and backoff

Embedding APIs throttle aggressively and fail transiently. Batch to the provider’s max, retry with exponential backoff, and persist embeddings keyed by a content hash so re-runs don’t repay the bill.

```python
import hashlib
import time

from openai import APIConnectionError, OpenAI, RateLimitError

client = OpenAI()

def content_hash(text: str, model: str) -> str:
    # The model name is part of the key: a different model means a different vector.
    return hashlib.sha256(f"{model}\x00{text}".encode()).hexdigest()

def embed_batch(
    texts: list[str],
    model: str = "text-embedding-3-small",
    batch_size: int = 128,
    max_retries: int = 6,
) -> list[list[float]]:
    out: list[list[float]] = []
    for i in range(0, len(texts), batch_size):
        batch = texts[i : i + batch_size]
        for attempt in range(max_retries):
            try:
                resp = client.embeddings.create(model=model, input=batch)
                out.extend(d.embedding for d in resp.data)
                break
            except (RateLimitError, APIConnectionError):
                # Throttling and transient network failures: back off and retry.
                time.sleep(min(60, 2 ** attempt))
        else:
            # The retry loop finished without a successful break.
            raise RuntimeError(f"embedding failed after {max_retries} retries")
    return out
```

Vector DB: The Honest Trade-offs

There is no universally best vector database. Pick based on operational fit, not benchmark charts.

- pgvector: if you already run Postgres, start here. One system to operate, transactional with your relational data, filters are real SQL. The HNSW index is production-ready. Weaknesses: multi-tenant at scale needs care, no native BM25 (use tsvector separately).

- Qdrant: excellent filter performance, native hybrid search, good Rust-level throughput, straightforward self-host. Weak spot historically was metadata-heavy multi-tenant loads; recent versions fixed most of it.

- Weaviate — schema-first, built-in modules for hybrid and multi-modal. More opinionated; more features to learn.

- Pinecone — serverless, zero ops, very fast to get started, costs can surprise at scale, cannot be self-hosted.

- Chroma — great for prototypes and local dev. I would not run it as the durable store of a production system.

- Elasticsearch / OpenSearch — underrated for hybrid. You get mature BM25, filters, aggregations, and kNN in one system. Heavy to operate if you don’t already run it.

My default stack, if nothing else constrains the choice: Postgres with pgvector for the vectors and a companion tsvector full-text index for the keyword leg. Postgres ranks with ts_rank rather than true BM25, but it fills the same lexical-match role. One system, transactional updates, real SQL filters. You can outgrow it, but you’ll know when.

```sql
CREATE EXTENSION IF NOT EXISTS vector;

CREATE TABLE chunks (
    id            BIGSERIAL PRIMARY KEY,
    doc_id        TEXT NOT NULL,
    text          TEXT NOT NULL,
    heading_trail TEXT[] NOT NULL DEFAULT '{}',
    metadata      JSONB NOT NULL DEFAULT '{}',
    content_hash  TEXT NOT NULL,
    embedding     VECTOR(1536),
    tsv           TSVECTOR
        GENERATED ALWAYS AS (to_tsvector('english', text)) STORED,
    created_at    TIMESTAMPTZ NOT NULL DEFAULT now()
);

CREATE INDEX chunks_embedding_hnsw
    ON chunks USING hnsw (embedding vector_cosine_ops)
    WITH (m = 16, ef_construction = 64);

CREATE INDEX chunks_tsv_gin ON chunks USING GIN (tsv);

CREATE INDEX chunks_doc_id ON chunks (doc_id);

CREATE INDEX chunks_metadata_gin ON chunks USING GIN (metadata);
```

Hybrid Search: Why Pure Vector Loses

Vector search captures semantic similarity. That is exactly what you want for “how do I cancel my subscription” matching a doc titled “Ending your plan.” It is exactly what you don’t want when a user pastes an error code, a SKU, a person’s name, or any other token where the literal match is the signal.

Vector embeddings smooth out rare tokens because that’s what they’re trained to do. A user who searches for ERR_CERT_AUTHORITY_INVALID wants the document that contains that exact string, not the document about “certificate validity problems in general.”

The fix is hybrid search: run BM25 and vector retrieval in parallel, then fuse the two ranked lists. Reciprocal Rank Fusion (RRF) is the cheapest-and-good-enough default — it doesn’t need score calibration between the two retrievers, which is the main reason naive score-weighted fusion fails.

```python
from collections import defaultdict

def rrf_fuse(
    rankings: list[list[str]],  # each inner list is chunk_ids in rank order
    k: int = 60,
) -> list[tuple[str, float]]:
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking):
            # rank is 0-based, so the top hit contributes 1 / (k + 1)
            scores[doc_id] += 1.0 / (k + rank + 1)
    return sorted(scores.items(), key=lambda x: x[1], reverse=True)

def hybrid_search(conn, query: str, query_vec: list[float], top_k: int = 50):
    with conn.cursor() as cur:
        # Lexical leg: Postgres full-text ranking (ts_rank) stands in for BM25.
        cur.execute(
            """
            SELECT id::text FROM chunks
            WHERE tsv @@ plainto_tsquery('english', %s)
            ORDER BY ts_rank(tsv, plainto_tsquery('english', %s)) DESC
            LIMIT %s
            """,
            (query, query, top_k),
        )
        bm25_ids = [r[0] for r in cur.fetchall()]

        # Semantic leg: cosine distance via pgvector's <=> operator.
        cur.execute(
            "SELECT id::text FROM chunks ORDER BY embedding <=> %s::vector LIMIT %s",
            (query_vec, top_k),
        )
        vec_ids = [r[0] for r in cur.fetchall()]
    return rrf_fuse([bm25_ids, vec_ids])
```

Over-retrieve at this stage. Pull 50–100 candidates per retriever even if you only plan to send 5 chunks to the LLM. The reranker in the next step depends on having a diverse candidate pool.

Reranking: Pay for Precision Where It Counts

Bi-encoder embeddings (what the vector DB uses) are fast because the query and document are embedded independently. That independence is also why they miss nuance — they can’t “read” the query and document together.

A cross-encoder reranker reads the query and each candidate jointly and produces a relevance score. It’s 10–100x slower per pair than a bi-encoder comparison, which is why you only run it on the top-N fused candidates, not the whole corpus.

Two realistic options:

- Cohere rerank-v3: hosted API, very strong quality, costs per 1K documents.

- bge-reranker-large / bge-reranker-v2-m3: open-weight, self-hostable on a single GPU.

```python
from sentence_transformers import CrossEncoder

reranker = CrossEncoder("BAAI/bge-reranker-large", max_length=512)

def rerank(query: str, candidates: list[tuple[str, str]], top_n: int = 5):
    """candidates: list of (chunk_id, chunk_text)."""
    pairs = [(query, text) for _, text in candidates]
    scores = reranker.predict(pairs)
    ranked = sorted(zip(candidates, scores), key=lambda x: x[1], reverse=True)
    return [(cid, text, float(score)) for (cid, text), score in ranked[:top_n]]
```

When rerankers are worth it

- Queries are short and ambiguous. Bi-encoders struggle most here; rerankers help most.

- Context budget is tight. When you can only afford 3–5 chunks in the prompt, precision on those 3–5 matters more than broad recall.

- Answer quality is correlated with citation quality. Grounded answers need high-precision retrieval.

When they’re not

- Tight latency budgets. A reranker adds 100–500ms even on GPU. If your end-to-end budget is under 300ms, skip it or move it behind a cache.

- Very long queries. When queries themselves are paragraphs, bi-encoders close most of the gap.

Measure, don’t assume. A reranker that costs 200ms and moves answer faithfulness from 0.72 to 0.78 on your eval set is a good trade. The same reranker that moves it from 0.88 to 0.89 is not.

Prompt Design for Citations and Grounding

A RAG answer without citations is indistinguishable from a hallucination. Users cannot verify, operators cannot debug, and legal cannot defend. Build citations in from day one.

The prompt pattern that works:

```python
SYSTEM = """You are a helpful assistant that answers questions using only the
provided sources. Every factual claim MUST be followed by a citation in the
form [S#], where # is the source index. If the sources do not contain the
answer, say so explicitly. Do not use prior knowledge. Do not invent sources."""

def build_prompt(query: str, chunks: list[dict]) -> list[dict]:
    sources = "\n\n".join(
        f"[S{i+1}] ({c['doc_id']} - {' > '.join(c['heading_trail'])})\n{c['text']}"
        for i, c in enumerate(chunks)
    )
    user = f"Question: {query}\n\nSources:\n{sources}\n\nAnswer with citations:"
    return [{"role": "system", "content": SYSTEM},
            {"role": "user", "content": user}]
```

Four design choices that matter:

- Number the sources in the prompt: [S1], [S2]. The model writes these back into the answer. Post-processing resolves them to doc URLs.

- Include the heading trail and doc_id with each chunk. The model uses them to disambiguate similar chunks and writes better citations.

- Explicit “say so” clause for missing answers. Without it, models guess. With it, they still occasionally guess, but far less.

- Order chunks by rerank score, best first. Models attend to the start and end of their context window more than the middle, so put your strongest evidence at both ends if you have the budget.

After the LLM responds, parse [S#] references and attach the actual source metadata. Reject answers whose claims have no attached citation — this alone prevents a whole class of silent hallucinations.
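The parse-and-reject step might look like the sketch below. It assumes each sentence is one claim and that the model places `[S#]` before the sentence's closing punctuation. The splitter is deliberately naive (abbreviations such as "e.g." will confuse it), and the function names are hypothetical.

```python
import re

CITATION = re.compile(r"\[S(\d+)\]")


def split_sentences(answer: str) -> list[str]:
    """Naive splitter: break on whitespace that follows ., ! or ?"""
    return [p for p in re.split(r"(?<=[.!?])\s+", answer.strip()) if p]


def validate_citations(answer: str, num_sources: int) -> tuple[bool, list[str]]:
    """Return (ok, problems). A sentence fails if it cites nothing or cites
    an index outside 1..num_sources."""
    problems: list[str] = []
    for sentence in split_sentences(answer):
        cited = [int(n) for n in CITATION.findall(sentence)]
        if not cited:
            problems.append(f"uncited: {sentence!r}")
        elif any(n < 1 or n > num_sources for n in cited):
            problems.append(f"unknown source: {sentence!r}")
    return (not problems, problems)
```

An explicit "the sources do not contain the answer" reply carries no citations by design, so detect that case before calling the validator rather than rejecting it.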

Evaluation: The Part Everyone Skips

Without eval, every “improvement” you ship is vibes. RAG has the benefit that answers are grounded in retrieved text, which means you can mechanically check a lot — more than with open-ended generation.

What to measure

At minimum, three numbers:

- Context recall — of the facts needed to answer the question, what fraction appears in the retrieved chunks? Measures retrieval.

- Answer relevance — does the answer actually address the question? Catches tangents.

- Faithfulness (groundedness) — is every claim in the answer supported by the retrieved context? Catches hallucination.

Ragas and TruLens implement all three out of the box using LLM-as-judge. You can also write your own rubric — LLM-as-judge is often fine for the first two, but faithfulness benefits from a stricter, claim-by-claim verifier.

```python
# Minimal faithfulness check: split the answer into claims and ask
# the judge whether each is supported by the retrieved context.
import json

from openai import OpenAI

client = OpenAI()

CLAIM_PROMPT = """Split the answer into atomic factual claims.
Return a JSON object of the form {"claims": ["...", "..."]}.
Ignore hedges and meta-statements."""

VERIFY_PROMPT = """Given CONTEXT and CLAIM, answer SUPPORTED or NOT_SUPPORTED.
A claim is SUPPORTED only if a careful reader could justify it from CONTEXT alone."""

def faithfulness(answer: str, context: str) -> float:
    claims = client.chat.completions.create(
        model="gpt-4o-mini",
        messages=[{"role": "system", "content": CLAIM_PROMPT},
                  {"role": "user", "content": answer}],
        # JSON mode returns an object, so the prompt asks for {"claims": [...]}.
        response_format={"type": "json_object"},
    )
    parsed = json.loads(claims.choices[0].message.content)
    claim_list: list[str] = parsed.get("claims", [])
    if not claim_list:
        return 1.0
    supported = 0
    for c in claim_list:
        v = client.chat.completions.create(
            model="gpt-4o-mini",
            messages=[{"role": "system", "content": VERIFY_PROMPT},
                      {"role": "user", "content": f"CONTEXT:\n{context}\n\nCLAIM: {c}"}],
        )
        verdict = (v.choices[0].message.content or "").strip().upper()
        # "NOT_SUPPORTED" does not start with "SUPPORTED", so this is unambiguous.
        if verdict.startswith("SUPPORTED"):
            supported += 1
    return supported / len(claim_list)
```

Build a gold set early

Twenty hand-labeled question/answer pairs with expected source documents are worth more than any published benchmark. Re-run the set on every feature change. Growing it to 100–300 examples covers enough edge cases to make the numbers stable.

Split the set: a frozen portion you rarely re-examine (to detect silent regressions) and a working portion you use daily for tuning. This is the same trap as in ML generally: if you look at a holdout too often, you have effectively trained on it.
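A gold set with expected source documents is enough to compute a retrieval score with no LLM judge. The sketch below measures document-level recall@k, which is a cheap proxy for context recall rather than the claim-level metric Ragas reports. `GoldExample` and the `retrieve` callable are hypothetical stand-ins for your own types.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class GoldExample:
    question: str
    expected_doc_ids: set[str]  # documents a correct answer must draw on


def recall_at_k(
    gold: list[GoldExample],
    retrieve: Callable[[str, int], list[str]],  # (question, k) -> ranked doc ids
    k: int = 5,
) -> float:
    """Mean fraction of expected documents that appear in the top-k results."""
    if not gold:
        raise ValueError("gold set is empty")
    total = 0.0
    for ex in gold:
        if not ex.expected_doc_ids:
            raise ValueError(f"no expected documents for: {ex.question!r}")
        retrieved = set(retrieve(ex.question, k)[:k])
        total += len(ex.expected_doc_ids & retrieved) / len(ex.expected_doc_ids)
    return total / len(gold)
```

Run it once on the working portion while tuning and once on the frozen portion before release; a gap between the two suggests you have overfit to the working set.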

Offline is not enough

Log production queries with retrieval scores, rerank scores, the chunks you sent, and the final answer. Sample a percentage for human review or judge review. Production distribution drifts from your gold set in ways you cannot predict; the only defense is to watch the real traffic.

Production Concerns

Caching

Three tiers, each with different invalidation semantics:

- Embedding cache by content hash. Re-indexing a document whose content didn’t change costs zero API calls.

- Retrieval cache by (normalized query, filter set). Short TTL (minutes). Eliminates redundant searches for the same question repeated across a team.

- Answer cache by (query hash, retrieved chunk IDs, prompt version). Tricky — must invalidate when the source changes. Use the retrieved chunk IDs as part of the key so an updated index naturally bypasses stale answers.

Updates to source documents

The wrong approach: delete the document, re-embed from scratch, insert. You lose URL stability, break referential integrity with logged queries, and pay full embedding cost.

The right approach: chunk the new version, compute content hashes, diff against existing chunks by hash, and only embed the new or changed ones. Delete chunks whose hashes no longer appear. Most document updates touch a small fraction of chunks, and the embedding bill drops accordingly.

```python
def reindex_document(conn, doc_id: str, new_chunks: list[Chunk]):
    new_hashes = {content_hash(c.text, "text-embedding-3-small"): c for c in new_chunks}
    with conn.cursor() as cur:
        cur.execute("SELECT content_hash FROM chunks WHERE doc_id = %s", (doc_id,))
        existing = {r[0] for r in cur.fetchall()}
        to_delete = existing - new_hashes.keys()
        to_insert = [c for h, c in new_hashes.items() if h not in existing]
        if to_delete:
            cur.execute(
                "DELETE FROM chunks WHERE doc_id = %s AND content_hash = ANY(%s)",
                (doc_id, list(to_delete)),
            )
        if to_insert:
            vectors = embed_batch([c.text for c in to_insert])
            for c, v in zip(to_insert, vectors):
                cur.execute(
                    """
                    INSERT INTO chunks (doc_id, text, heading_trail, content_hash, embedding)
                    VALUES (%s, %s, %s, %s, %s)
                    """,
                    (c.doc_id, c.text, c.heading_trail,
                     content_hash(c.text, "text-embedding-3-small"), v),
                )
    conn.commit()
```

Freshness

If your corpus changes during the day, retrieval can serve stale chunks for minutes after a write. Options in order of cost:

- Accept it. Most knowledge bases tolerate 5-minute staleness.

- Dual-read: query the index and recent write-ahead log, merge.

- Real-time indexing pipeline with per-document versioning. Expensive; only worth it for genuinely low-tolerance domains (ops, incident response).

PII and redaction

If your docs contain PII or secrets, redact before embedding. Embeddings leak information; a vector of someone’s SSN is not a safe storage format. Run a redactor (presidio, a regex pass for obvious patterns, or a small classifier) in the ingestion pipeline and store redacted text plus a pointer to the original for authorized users.
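The "regex pass for obvious patterns" can be as small as the sketch below. The three patterns are illustrative assumptions covering email addresses, US SSNs, and AWS access key IDs; they are nowhere near complete, and a real deployment would layer a dedicated tool such as presidio on top.

```python
import re

# Illustrative patterns only; real deployments need locale-specific rules.
PATTERNS: dict[str, re.Pattern[str]] = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "AWS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a typed placeholder; return text and match counts."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts
```

Typed placeholders such as `[EMAIL]` keep the sentence readable for the embedding model, and the counts give you a cheap metric for spotting documents that need manual review.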

Cost

The quiet cost killers, in order of how often I see them:

- Re-embedding everything on every index rebuild. Cache by content hash.

- Embedding at large dimensions when small was fine. Evaluate; don’t assume bigger wins.

- Reranking everything, including trivially obvious top-1 hits. Skip the reranker when the top bi-encoder hit has a very high score.

- Sending 20 chunks when 5 answer the question. Longer prompts cost more and usually produce worse answers due to middle-of-context attention drop.
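The reranker-skipping item above could be gated with a check like this one. It assumes the scores are cosine similarities sorted best-first. Both thresholds are hypothetical starting points that you would tune against your eval set, and `should_rerank` is an illustrative name.

```python
def should_rerank(scores: list[float], high: float = 0.85, margin: float = 0.15) -> bool:
    """Skip reranking only when the top hit is both strong and clearly ahead."""
    if len(scores) < 2:
        return False  # nothing to reorder
    top, runner_up = scores[0], scores[1]
    confident = top >= high and (top - runner_up) >= margin
    return not confident
```

Requiring a margin as well as a high score avoids skipping the reranker when several candidates are all strong, which is exactly when ordering matters.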

Architecture for a Production RAG Service

```mermaid
flowchart TB
    subgraph Ingest[Ingestion]
        S1[Source connectors<br/>S3 / GDrive / CMS] --> S2[Parse + OCR<br/>worker pool]
        S2 --> S3[Chunker]
        S3 --> S4[Redactor]
        S4 --> S5[Embedding<br/>batch worker]
        S5 --> DB[(Postgres<br/>pgvector + tsvector)]
    end
    subgraph API[Query service]
        U[Client] --> GW[API gateway]
        GW --> QS[Query service]
        QS --> QR[Query rewriter<br/>optional]
        QR --> HR[Hybrid retriever<br/>BM25 + vector]
        HR --> DB
        HR --> RR[Reranker]
        RR --> LL[LLM client]
        LL --> CV[Citation validator]
        CV --> GW
    end
    subgraph Obs[Observability]
        QS -.logs.-> TEL[OTLP collector]
        TEL --> TR[Traces + metrics]
        CV -.samples.-> EV[Eval harness]
        EV --> DASH[Quality dashboard]
    end
```

Separation of concerns that matters in practice:

- Query service is stateless. Scales horizontally. The DB is the only shared state.

- Reranker is its own service or a sidecar. Different GPU requirements, different scaling curve, and sometimes a different vendor.

- Embedding worker is separate from the query service. You do not want an ingestion spike starving user queries of CPU.

- Citation validator runs on the critical path. An unvalidated answer is not shipped. It’s cheap and it catches a specific class of hallucination that eval harnesses sometimes miss.

A Node.js API endpoint

Most teams I work with run the retrieval stack in Python and expose it to the rest of the product over a typed API. A thin TypeScript wrapper in front of the Python service keeps the product codebase simple and lets frontend engineers stay in the language they know.

```typescript
// apps/api/src/routes/rag.ts
import type { Request, Response } from "express";
import { z } from "zod";

const RagRequest = z.object({
  query: z.string().min(1).max(2000),
  filters: z.record(z.string(), z.unknown()).optional(),
  top_k: z.number().int().min(1).max(20).default(5),
});

interface RagClient {
  answer(input: z.infer<typeof RagRequest>): Promise<{
    answer: string;
    citations: { id: string; doc_id: string; url: string; snippet: string }[];
    retrieval_ms: number;
    rerank_ms: number;
    llm_ms: number;
  }>;
}

export function createRagHandler(client: RagClient) {
  return async (req: Request, res: Response) => {
    const parsed = RagRequest.safeParse(req.body);
    if (!parsed.success) {
      return res.status(400).json({ error: parsed.error.flatten() });
    }
    const started = Date.now();
    try {
      const result = await client.answer(parsed.data);
      // Per-stage timings go out as headers so tracing can pick them up.
      res.setHeader("x-rag-retrieval-ms", result.retrieval_ms);
      res.setHeader("x-rag-rerank-ms", result.rerank_ms);
      res.setHeader("x-rag-llm-ms", result.llm_ms);
      res.setHeader("x-rag-total-ms", Date.now() - started);
      res.json({
        answer: result.answer,
        citations: result.citations,
      });
    } catch (err) {
      // req.log assumes logging middleware (e.g. pino-http) has attached a logger.
      req.log.error({ err, query: parsed.data.query }, "rag.answer failed");
      res.status(502).json({ error: "retrieval_service_unavailable" });
    }
  };
}
```

The endpoint is deliberately boring. The smart code lives in the Python service. The TypeScript side validates input, passes through, surfaces timing headers for tracing, and returns a stable response shape to clients.

Closing Checklist

Before shipping a RAG system to real users, go through this list end to end. Every item maps to a failure I’ve watched teams (including mine) hit.

- Ingestion handles PDFs with OCR fallback, not just text extraction.

- Tables and code blocks survive chunking intact.

- Near-duplicates are deduplicated before indexing.

- Chunking is document-structure-aware where structure exists.

- Embeddings are cached by content hash; re-indexing is incremental.

- Hybrid retrieval (BM25 + vector, fused with RRF) is the default, not a “future improvement.”

- A reranker is in place, with latency budget measured and justified.

- Prompts enforce citations; a validator rejects uncited claims.

- Gold eval set of at least 50 examples; CI runs it on every prompt or retrieval change.

- Production queries are logged with retrieval metadata; a sample is judged weekly.

- PII is redacted before embedding, not after.

- Source-document updates use hash-diffing, not full re-index.

- Dashboards show retrieval latency, rerank latency, LLM latency, faithfulness score, and cost per query.

Further Reading

- Dense Passage Retrieval — Karpukhin et al., 2020. Foundational paper for bi-encoder retrieval.

- ColBERT — Khattab and Zaharia, 2020. Late-interaction retrieval, a middle ground between bi-encoder and cross-encoder.

- Lost in the Middle — Liu et al., 2023. Why long contexts under-perform and what to do about it.

- The Ragas and TruLens project docs — the most practical entry points for RAG evaluation.

A RAG system that works in production is an exercise in doing many boring things well. Parse carefully, chunk thoughtfully, retrieve with both keywords and semantics, rerank when it earns its latency, cite everything, and measure continuously. None of these steps is glamorous, and all of them matter more than whichever model is on top of the leaderboard this quarter.