The ML Production Gap

There’s a well-documented gap between “model works in a notebook” and “model serves predictions reliably in production.” I’ve seen data scientists train excellent models that never make it to production because the engineering infrastructure wasn’t there. Conversely, I’ve seen engineers build beautiful serving infrastructure for models that perform poorly because they weren’t validated properly.

Bridging this gap requires both ML understanding and production engineering skills. FastAPI hits the sweet spot: it’s Python (so data scientists can read and contribute), it’s fast (built on Starlette, typically served by Uvicorn, with async support), and it has built-in validation and documentation (via Pydantic and OpenAPI).

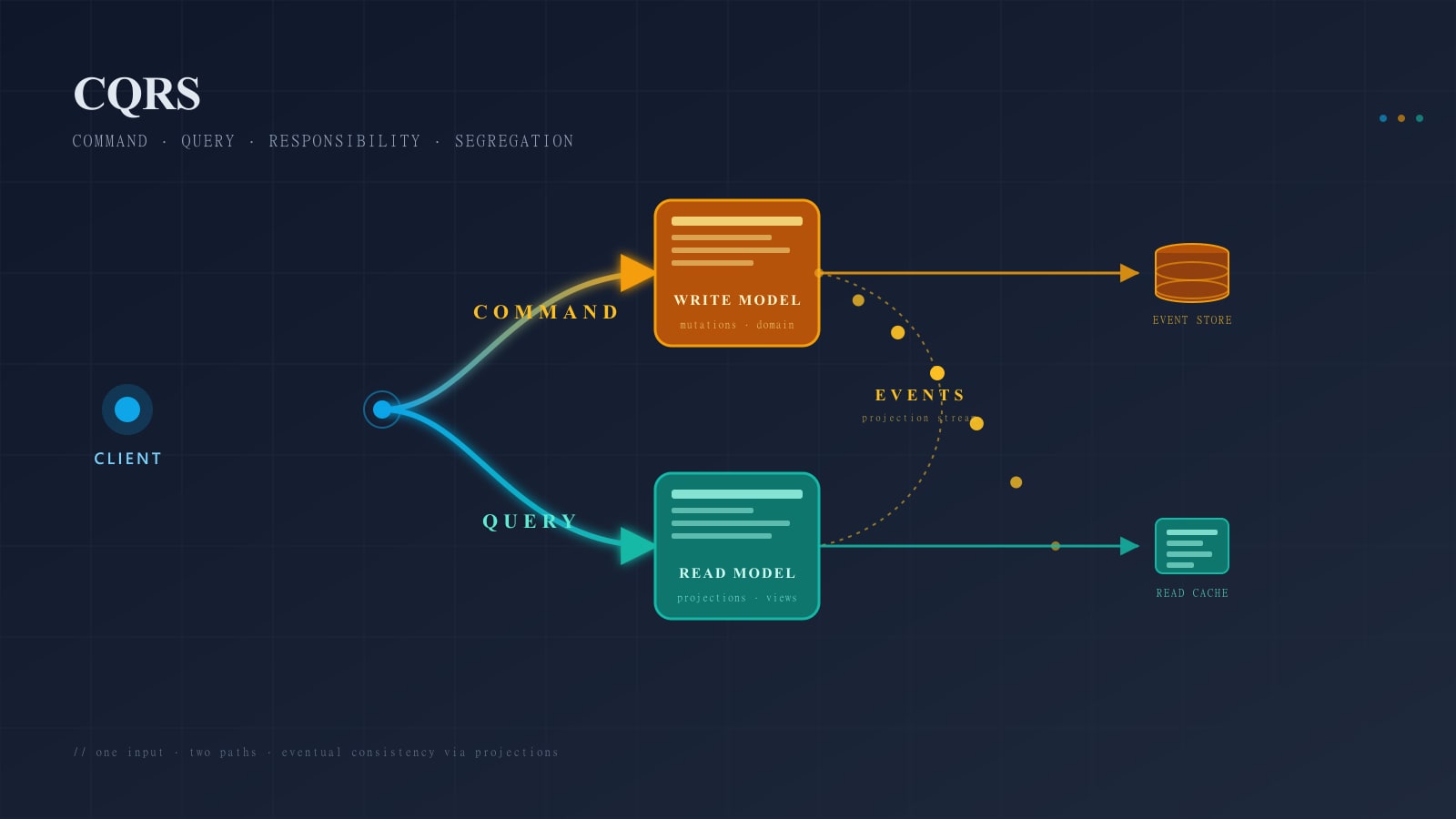

Architecture Overview

A production ML serving system has five layers: the API layer (receives requests, validates input, returns predictions), the model layer (loads and manages model artifacts, runs inference), the preprocessing layer (transforms raw input into model-ready features), the monitoring layer (tracks prediction distributions, latency, and model drift), and the infrastructure layer (containerization, scaling, health checks).

FastAPI handles the API layer natively. The other layers are patterns you build on top.

Input Validation with Pydantic

One of the most common production ML bugs is malformed input. A model trained on features in a specific range can produce garbage predictions when given inputs outside that range, usually without raising any error. Pydantic models catch this before inference.

Define your input schema with strict types, value ranges, and custom validators. A house price prediction model might require square footage between 100 and 50,000, bedrooms between 0 and 20, and a valid zip code format. If any validation fails, the API returns a clear 422 error instead of a nonsensical prediction.

This is the first line of defense against data quality issues. The model should never see invalid input.
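As a minimal sketch of the house price schema described above, assuming Pydantic v2 (where the regex keyword is `pattern`). The class name, field names, and the US ZIP pattern are illustrative assumptions, not part of any real service.

```python
from pydantic import BaseModel, Field, ValidationError


class HouseFeatures(BaseModel):
    # Ranges mirror the example in the text; field names are hypothetical.
    square_feet: float = Field(ge=100, le=50_000)
    bedrooms: int = Field(ge=0, le=20)
    # US ZIP or ZIP+4 format (an assumption about what "valid" means here).
    zip_code: str = Field(pattern=r"^\d{5}(-\d{4})?$")


def validation_errors(payload: dict) -> list[str]:
    """Return the names of the fields that failed validation (empty if valid)."""
    try:
        HouseFeatures(**payload)
    except ValidationError as exc:
        return [str(err["loc"][0]) for err in exc.errors()]
    return []
```

When a class like this is used as the type of a FastAPI endpoint's body parameter, FastAPI runs the same validation and returns the 422 response on its own; the helper function here only makes the behavior visible outside a running server.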

Model Loading Patterns

Singleton Pattern

Load your model once at application startup, not per-request. Model loading (especially for large neural networks) can take seconds or minutes. Storing the loaded model as a module-level variable or using FastAPI’s lifespan events ensures it’s ready when the first request arrives.

Model Versioning

Serve multiple model versions simultaneously. Your API should support a version parameter that routes to the correct model. This enables A/B testing, gradual rollouts, and instant rollback.

Store model artifacts with version metadata: the model file, training parameters, feature schema, and performance metrics from evaluation. When something goes wrong in production, you need to trace back to exactly which model is misbehaving and what it was trained on.
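One way to picture version routing is a small in-memory registry. This is a sketch under my own assumptions: `ModelVersion` and `ModelRegistry` are hypothetical names, and a real system would likely back this with an artifact store rather than a dictionary.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class ModelVersion:
    """One servable model plus the metadata needed to trace it later."""
    version: str
    predict: Callable[[list], float]
    training_params: dict = field(default_factory=dict)
    feature_schema: list = field(default_factory=list)
    eval_metrics: dict = field(default_factory=dict)


class ModelRegistry:
    def __init__(self) -> None:
        self._versions = {}
        self.default_version: Optional[str] = None

    def register(self, model: ModelVersion, make_default: bool = False) -> None:
        self._versions[model.version] = model
        if make_default or self.default_version is None:
            self.default_version = model.version

    def get(self, version: Optional[str] = None) -> ModelVersion:
        # Fall back to the default when the caller does not pin a version.
        key = version or self.default_version
        if key not in self._versions:
            raise KeyError(f"unknown model version: {key!r}")
        return self._versions[key]
```

An endpoint would pass its optional version parameter straight to `registry.get(...)`. Rollback then amounts to pointing `default_version` back at the previous entry, which stays loaded.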

Graceful Model Updates

Updating a model shouldn’t require downtime. Load the new model in a background thread, validate it against a test dataset, and swap it atomically. The old model continues serving until the new one is ready. If validation fails, the old model keeps running and you get an alert.
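The load, validate, swap sequence can be sketched with the standard library alone. `ModelHolder`, the `loader` callable, and the `(input, expected_output)` test cases are all hypothetical; the validation rule (absolute tolerance on a handful of cases) is a stand-in for whatever check fits your model.

```python
import threading
from typing import Callable

Model = Callable[[float], float]


class ModelHolder:
    """Serves the current model and swaps in a new one only after it validates."""

    def __init__(self, model: Model) -> None:
        self._model = model
        self._update_lock = threading.Lock()  # serializes concurrent updates
        self.last_update_ok = None  # None until an update has been attempted

    def predict(self, x: float) -> float:
        # Grab one reference up front so a swap mid-request cannot mix models.
        model = self._model
        return model(x)

    def _load_validate_swap(self, loader, test_cases, tolerance) -> None:
        with self._update_lock:
            candidate = loader()  # slow part; requests keep using the old model
            ok = all(abs(candidate(x) - expected) <= tolerance
                     for x, expected in test_cases)
            if ok:
                self._model = candidate  # single attribute rebind: the swap
            self.last_update_ok = ok  # a real service would alert on False

    def update_in_background(self, loader, test_cases, tolerance=1e-6):
        thread = threading.Thread(
            target=self._load_validate_swap,
            args=(loader, list(test_cases), tolerance),
            daemon=True,
        )
        thread.start()
        return thread
```

Readers never take the lock. In CPython, rebinding `self._model` replaces one reference, so a request sees either the old model or the new one.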

Preprocessing Pipeline

Feature preprocessing must be identical between training and serving. Any mismatch is a frequent source of training-serving skew. If your training pipeline normalizes features using the training set’s mean and standard deviation, the serving pipeline must use those exact same values, not recompute them from the inference input.

Serialize your preprocessing pipeline alongside your model. Scikit-learn pipelines, custom transformers, and feature scalers should all be saved and loaded together. A prediction request flows through the exact same transformations that the training data went through.
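To make the idea concrete without pulling in a dependency, here is a standard-library stand-in for a fitted scaler that is saved in the same bundle as the model. `ScalerParams`, `dump_bundle`, and `load_bundle` are hypothetical names, and the "model" is just a list of weights.

```python
import json
import statistics
from dataclasses import asdict, dataclass


@dataclass
class ScalerParams:
    """Training-set statistics, frozen at fit time."""
    mean: float
    std: float

    @classmethod
    def fit(cls, training_values):
        return cls(statistics.fmean(training_values),
                   statistics.pstdev(training_values))

    def transform(self, value: float) -> float:
        # Always uses the stored training statistics, never the request's own.
        return (value - self.mean) / self.std


def dump_bundle(scaler: ScalerParams, weights: list) -> str:
    """Serialize preprocessing and model together so they cannot drift apart."""
    return json.dumps({"scaler": asdict(scaler), "weights": weights})


def load_bundle(blob: str):
    bundle = json.loads(blob)
    return ScalerParams(**bundle["scaler"]), bundle["weights"]
```

With scikit-learn the same idea is usually a single `Pipeline` object that contains both the scaler and the estimator and is persisted as one file, for example with `joblib.dump`.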

Feature Validation

Beyond input validation, validate that computed features fall within expected ranges. If a feature that was always between 0 and 1 during training suddenly shows values above 10 in production, something is wrong — either the input is unusual or the preprocessing has a bug. Log these anomalies even if you still return a prediction, because they’re early warnings of data drift.
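A sketch of that check might look like the following. The feature names and the `TRAINING_RANGES` table are made up for illustration; in practice the ranges would be recorded at training time and shipped with the model.

```python
import logging

logger = logging.getLogger("feature_validation")

# Hypothetical ranges observed during training, stored with the model.
TRAINING_RANGES = {
    "price_per_sqft_scaled": (0.0, 1.0),
    "age_scaled": (0.0, 1.0),
}


def out_of_range_features(features: dict) -> list:
    """Log and return features outside their training range; never raises."""
    anomalies = []
    for name, value in features.items():
        low, high = TRAINING_RANGES.get(name, (float("-inf"), float("inf")))
        if not low <= value <= high:
            logger.warning(
                "feature %s=%s outside training range [%s, %s]",
                name, value, low, high,
            )
            anomalies.append(name)
    return anomalies
```

The function only reports. The caller still returns the prediction and can attach the anomaly list to its structured log entry.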

Async Inference for Throughput

FastAPI’s async support lets you handle concurrent requests efficiently. For CPU-bound inference (most traditional ML models), run the model call in a thread pool executor so it doesn’t block the event loop; this helps most when the underlying library releases the GIL during computation, and a process pool is the fallback when it doesn’t. For GPU inference (deep learning models), batch requests together and run inference on the batch for better GPU utilization.
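The thread pool approach for a blocking model call can be sketched as follows. `blocking_predict` is a hypothetical stand-in for a synchronous `model.predict(...)`, with `time.sleep` simulating the inference time.

```python
import asyncio
import time
from concurrent.futures import ThreadPoolExecutor

executor = ThreadPoolExecutor(max_workers=4)


def blocking_predict(features: list) -> float:
    """Stand-in for a synchronous model call."""
    time.sleep(0.05)  # simulates inference time
    return sum(features)


async def predict(features: list) -> float:
    # Hand the blocking call to a worker thread so the event loop stays free.
    loop = asyncio.get_running_loop()
    return await loop.run_in_executor(executor, blocking_predict, features)


async def predict_many(batch: list) -> list:
    # Several requests in flight at once, each on its own worker thread.
    return await asyncio.gather(*(predict(f) for f in batch))
```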

A common pattern for high-throughput systems: requests arrive individually, get added to a batch queue, and a background worker runs inference when the batch fills up or a short timeout expires, whichever comes first. Each request awaits its individual result. This amortizes the per-inference overhead across multiple predictions.
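Below is a minimal asyncio sketch of such a batching queue. `MicroBatcher`, `max_batch_size`, and `max_wait_s` are names I picked; `batch_predict` is assumed to take a list of inputs and return outputs in the same order. The worker here flushes when the batch is full or when a short wait expires, whichever happens first.

```python
import asyncio


class MicroBatcher:
    """Collects single requests and runs the model on small batches."""

    def __init__(self, batch_predict, max_batch_size=8, max_wait_s=0.01):
        self._batch_predict = batch_predict  # hypothetical: inputs -> outputs
        self._max_batch_size = max_batch_size
        self._max_wait_s = max_wait_s
        self._queue = asyncio.Queue()

    async def predict(self, item):
        future = asyncio.get_running_loop().create_future()
        await self._queue.put((item, future))
        return await future  # resolved by the worker below

    async def run(self):
        loop = asyncio.get_running_loop()
        while True:
            batch = [await self._queue.get()]  # block until there is work
            deadline = loop.time() + self._max_wait_s
            while len(batch) < self._max_batch_size:
                remaining = deadline - loop.time()
                if remaining <= 0:
                    break
                try:
                    batch.append(
                        await asyncio.wait_for(self._queue.get(), remaining)
                    )
                except asyncio.TimeoutError:
                    break
            # In a real service this call would run on a worker thread or GPU.
            outputs = self._batch_predict([item for item, _ in batch])
            for (_, future), output in zip(batch, outputs):
                if not future.done():
                    future.set_result(output)
```

The worker would be started once at application startup with `asyncio.create_task(batcher.run())`, and each request handler would simply `await batcher.predict(item)`.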

Health Checks and Readiness

Implement three health check endpoints: a liveness endpoint (is the process running), a readiness endpoint (is the model loaded and ready to serve), and a startup endpoint (for slow-starting models that need time to load weights).

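Kubernetes uses these endpoints to manage pod lifecycle. A pod that’s alive but not ready won’t receive traffic. A container that fails liveness checks gets restarted. While a startup probe is still failing, liveness and readiness checks are held off, so a slow model load isn’t mistaken for a crash. This prevents traffic from reaching pods that are still loading models or have entered a bad state.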

Monitoring Predictions

Distribution Monitoring

Track the distribution of predictions over time. If your house price model suddenly starts predicting values 3x higher than usual, something has changed — either the input data shifted or the model is stale. Set alerts for distribution shifts using statistical tests or simple threshold checks on prediction percentiles.
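As one example of the simple threshold variant, the sketch below compares a rolling median of recent predictions against a baseline median taken at evaluation time. `PredictionMonitor` and its parameters are hypothetical, and the 3x ratio just echoes the example above.

```python
import statistics
from collections import deque


class PredictionMonitor:
    """Flags when the rolling median drifts too far from a baseline median."""

    def __init__(self, baseline_median, max_ratio=3.0, window=1000, min_samples=30):
        self._baseline_median = baseline_median
        self._max_ratio = max_ratio
        self._min_samples = min_samples
        self._recent = deque(maxlen=window)  # oldest predictions fall off

    def record(self, prediction: float) -> bool:
        """Store a prediction; return True when an alert should fire."""
        self._recent.append(prediction)
        if len(self._recent) < self._min_samples:
            return False  # not enough data to judge yet
        ratio = statistics.median(self._recent) / self._baseline_median
        return ratio > self._max_ratio or ratio < 1 / self._max_ratio
```

A percentile other than the median, or a statistical test on the whole window, slots into `record` in the same way.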

Feature Drift Detection

Compare the distribution of incoming features against the training data distribution. Significant drift indicates that the real world has changed in ways the model wasn’t trained for. Because it can be measured without waiting for ground-truth labels, it is often the earliest warning sign that model performance may be degrading.
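One common way to put a number on drift for a single numeric feature is the Population Stability Index (PSI). The text doesn't prescribe a specific metric, so treat this as one option among several; the binning scheme (equal-width bins over the training range) and the small floor for empty bins are my assumptions.

```python
import math


def population_stability_index(train, live, bins=10) -> float:
    """PSI between two samples of one feature, binned over the training range."""
    low, high = min(train), max(train)
    width = (high - low) / bins or 1.0  # guard against a constant feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp so live values outside the training range hit an edge bin.
            index = min(max(int((v - low) / width), 0), bins - 1)
            counts[index] += 1
        # Small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    p_train, p_live = proportions(train), proportions(live)
    return sum((q - p) * math.log(q / p) for p, q in zip(p_train, p_live))
```

Identical samples give a PSI of zero, and the value grows as the live distribution moves away from training. A commonly cited rule of thumb treats values above roughly 0.25 as significant drift, but the right alert threshold depends on the feature.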

Latency and Error Tracking

Track inference latency (P50, P95, P99) separately from total request latency. This tells you whether slowness comes from the model, preprocessing, or API overhead. Track error rates by error type: validation errors, preprocessing failures, inference exceptions, and timeouts.
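Per-stage timing can be sketched with a context manager and `statistics.quantiles`. The in-memory `durations_ms` store and the stage names are illustrative; a production service would more likely export these to a metrics system.

```python
import statistics
import time
from collections import defaultdict
from contextlib import contextmanager

# Stage name -> durations in milliseconds (in-memory, for illustration).
durations_ms = defaultdict(list)


@contextmanager
def timed(stage: str):
    start = time.perf_counter()
    try:
        yield
    finally:
        # Recorded even if the stage raises, so failures are timed too.
        durations_ms[stage].append((time.perf_counter() - start) * 1000)


def percentiles(stage: str) -> dict:
    """Return P50, P95 and P99 for a stage (needs at least two samples)."""
    cuts = statistics.quantiles(durations_ms[stage], n=100)  # 99 cut points
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}
```

Wrapping preprocessing and inference in separate `with timed("preprocessing"):` and `with timed("inference"):` blocks, and the whole handler in a third, gives the breakdown described above.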

Scaling Considerations

Horizontal Scaling

Stateless API servers scale horizontally — just add more replicas. The model is loaded into each replica’s memory. For a 500MB model with 10 replicas, that’s 5GB of total memory. Factor this into your capacity planning.

GPU Sharing

If you’re using GPU inference, GPU memory is your bottleneck. Multiple models can share a single GPU using frameworks like NVIDIA Triton Inference Server, or you can run multiple small model servers on a single GPU. Profile your GPU memory usage before committing to an architecture.

Caching Predictions

For models with deterministic outputs (same input always produces same prediction), cache predictions aggressively. A Redis cache keyed on a hash of the input features can eliminate redundant inference calls entirely. For a recommendation system that serves repeated users, caching can reduce inference load by 60-80%.
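The keying scheme can be sketched with a plain dictionary standing in for Redis, so the example runs without a server. `run_model` is a hypothetical deterministic model, and including the model version in the key is my own addition so that a model update doesn't serve predictions cached from the previous version.

```python
import hashlib
import json

# A plain dict stands in for Redis so the sketch runs without a server.
_cache = {}
stats = {"inference_calls": 0}


def cache_key(features: dict, model_version: str) -> str:
    # sort_keys makes the key independent of the order fields arrive in.
    canonical = json.dumps(features, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(f"{model_version}:{canonical}".encode()).hexdigest()


def run_model(features: dict) -> float:
    """Hypothetical deterministic model; counts how often it actually runs."""
    stats["inference_calls"] += 1
    return 150.0 * features["square_feet"]


def cached_predict(features: dict, model_version: str = "v1") -> float:
    key = cache_key(features, model_version)
    if key not in _cache:
        _cache[key] = run_model(features)
    return _cache[key]
```

With a real Redis client the dictionary lookups become a get and a set with an expiry, and the key function stays the same.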

Testing ML Services

Test at every layer: unit tests for preprocessing functions, integration tests for the full prediction pipeline (input to output), contract tests for API compatibility, and load tests for performance under production-like traffic.

Include edge case tests: what happens with minimum valid input, maximum valid input, missing optional fields, and unexpected feature combinations. ML models can produce surprising outputs for inputs near the boundary of their training distribution.
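A few of these edge cases, written as plain test functions that pytest would pick up. `predict_price` is a hypothetical stand-in for the full validation and inference pipeline, with the square footage limits borrowed from the validation example earlier.

```python
import math

MIN_SQFT, MAX_SQFT = 100, 50_000


def predict_price(square_feet: float, bedrooms: int = 0) -> float:
    """Hypothetical stand-in for the full validation + inference pipeline."""
    if not MIN_SQFT <= square_feet <= MAX_SQFT:
        raise ValueError("square_feet out of range")
    return 50_000 + 150 * square_feet + 10_000 * bedrooms


def test_boundaries_produce_finite_positive_prices():
    for sqft in (MIN_SQFT, MAX_SQFT):
        price = predict_price(sqft)
        assert math.isfinite(price) and price > 0


def test_missing_optional_field_uses_default():
    assert predict_price(1_000) == predict_price(1_000, bedrooms=0)


def test_just_outside_range_is_rejected():
    for sqft in (MIN_SQFT - 1, MAX_SQFT + 1):
        try:
            predict_price(sqft)
        except ValueError:
            continue
        raise AssertionError(f"{sqft} should have been rejected")
```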

Lessons Learned

Start simple: a single FastAPI service with one model, basic validation, and structured logging. Add complexity only when you need it. I’ve seen teams build elaborate ML platforms before they had their first model in production. Ship the prediction, then iterate on the infrastructure.